NAIC AI Systems Evaluation Tool


Here's Why That Should Change How You Buy.

Letters are landing on desks at insurance companies across twelve states right now. Not the routine kind. These are structured requests from domiciliary regulators asking insurers to lay bare how they use artificial intelligence, what governance sits around those systems, and whether anyone can actually trace a straight line from an AI decision back to the data and rules that produced it.

The NAIC's AI Systems Evaluation Tool pilot went live in March 2026. It runs through September. Formal nationwide adoption is expected at the fall meeting in November. State regulators are, for the first time, collectively examining how insurers deploy AI in production environments. Not theoretically. Not through position papers. Through a structured evaluation framework that feeds directly into future market conduct exams, financial analysis, and risk assessments.

If you run AI agents for customer support, claims intake, or policy servicing, the conversation has shifted. It's no longer about whether your AI resolves tickets. It's about whether you can show a regulator exactly how it resolves them.

What the NAIC AI systems evaluation tool covers

The framework breaks into four exhibits. Each one pulls at a different thread of how an insurer operates AI.

Exhibit A: Where is AI running?

This is the scoping question. Regulators want to know every place AI touches the organization. Underwriting. Pricing. Claims. Customer service. Fraud detection. Billing. They also want to know whether each system was built internally, purchased from a vendor, or some combination. If your inventory has gaps here, the rest of the conversation gets uncomfortable fast.
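To make the scoping question concrete, here's a minimal sketch of an internal AI inventory check, assuming a simple in-house record format. The field names, the `Sourcing` values, and the list of business areas are illustrative assumptions, not fields defined by the NAIC tool. The check surfaces business areas with no registered system (so the team can confirm whether that is a true absence or a gap) and systems with no accountable owner.

```python
from dataclasses import dataclass
from enum import Enum


class Sourcing(Enum):
    # How the system was obtained: built internally, bought from a vendor, or both
    INTERNAL = "internal"
    VENDOR = "vendor"
    HYBRID = "hybrid"


# Business areas named in the scoping question (illustrative list)
BUSINESS_AREAS = {"underwriting", "pricing", "claims", "customer_service",
                  "fraud_detection", "billing"}


@dataclass
class AISystem:
    name: str
    area: str
    sourcing: Sourcing
    owner: str  # accountable person or team; empty string means unassigned


def inventory_gaps(systems: list[AISystem]) -> dict[str, list[str]]:
    """Return areas with no registered system and systems lacking an owner."""
    covered = {s.area for s in systems}
    return {
        "areas_without_entries": sorted(BUSINESS_AREAS - covered),
        "systems_without_owner": [s.name for s in systems if not s.owner.strip()],
    }


systems = [
    AISystem("claims-triage", "claims", Sourcing.VENDOR, "Claims Ops"),
    AISystem("support-agent", "customer_service", Sourcing.HYBRID, ""),
]
print(inventory_gaps(systems))
# {'areas_without_entries': ['billing', 'fraud_detection', 'pricing', 'underwriting'], 'systems_without_owner': ['support-agent']}
```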

Exhibit B: Is governance real or theoretical?

Does the company have a written AI policy? Is the board involved? What risk framework governs how these systems get developed, tested, deployed, and monitored? Insurers can respond through a narrative or a structured checklist. The format matters less than the substance. Regulators want evidence that governance lives in the organization's daily operations, not in a PDF that no one references after onboarding.

Exhibit C: What happens inside high-risk systems?

This exhibit draws the heaviest scrutiny. It covers claims automation, policyholder servicing agents, underwriting triage, and anything where AI touches a decision that directly affects a consumer. How does the system decide? What controls catch errors? What happens when an edge case shows up that the model wasn't explicitly designed for?

Exhibit D: Where does the data come from?

Lineage. Quality checks. Sources. Validation. Regulators are looking specifically for data elements that could produce unfair outcomes, including proxies for race or ethnicity, social media signals, and aerial imagery used without human verification. If you can't explain where your training data originates and how it's governed, Exhibit D becomes a problem.
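Here's a hedged sketch of how those data concerns might be encoded as a pre-deployment check, assuming each data element carries a small lineage record. The category labels and the rule that aerial imagery needs a human-verification flag are illustrative assumptions drawn from the examples above, not NAIC definitions.

```python
from dataclasses import dataclass

# Categories the paragraph singles out (illustrative labels, not an official taxonomy)
FAIRNESS_REVIEW_CATEGORIES = {"race_ethnicity_proxy", "social_media"}


@dataclass
class DataElement:
    name: str
    source: str                   # originating system or vendor; empty if unknown
    category: str                 # classification assigned during data review
    validated: bool               # passed the team's quality checks
    human_verified: bool = False  # only meaningful for imagery-derived signals


def lineage_findings(elements: list[DataElement]) -> list[str]:
    """List every element that a data lineage review would likely question."""
    findings = []
    for e in elements:
        if not e.source:
            findings.append(f"{e.name}: unknown source")
        if not e.validated:
            findings.append(f"{e.name}: missing or failed quality validation")
        if e.category in FAIRNESS_REVIEW_CATEGORIES:
            findings.append(f"{e.name}: category '{e.category}' requires fairness review")
        if e.category == "aerial_imagery" and not e.human_verified:
            findings.append(f"{e.name}: aerial imagery used without human verification")
    return findings
```

An empty result doesn't prove the data is fair; it only means nothing matched the rules the team chose to write down.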

The proportionality principle

Regulators aren't treating all AI equally. They're applying proportionality, which means high-risk, consumer-facing systems get deep examination while low-risk back-office automation gets a lighter touch. That puts AI agents handling policyholder interactions directly in the spotlight: claims status inquiries, endorsement processing, billing disputes, certificate of insurance issuance. If your AI touches a policyholder, regulators want to understand it.
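One way a team might mirror proportionality internally is a simple tiering rule applied to each inventory entry. The three inputs and tier names below are assumptions for illustration; the NAIC tool does not prescribe this scheme.

```python
from enum import Enum


class Tier(Enum):
    HIGH = "high"
    MEDIUM = "medium"
    LOW = "low"


def risk_tier(consumer_facing: bool, affects_consumer_decision: bool,
              uses_policyholder_data: bool) -> Tier:
    """Assign review depth; anything touching a policyholder gets the deepest review."""
    if consumer_facing or affects_consumer_decision:
        return Tier.HIGH
    if uses_policyholder_data:
        return Tier.MEDIUM  # back-office, but still handles regulated data
    return Tier.LOW         # back-office automation with no consumer impact


# A policyholder servicing agent lands in the top tier
print(risk_tier(consumer_facing=True, affects_consumer_decision=False,
                uses_policyholder_data=True))
```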

California, Colorado, Connecticut, Florida, Iowa, Louisiana, Maryland, Pennsylvania, Rhode Island, Vermont, Virginia, and Wisconsin are the twelve participating states. Each one is selecting between one and more than ten insurers for the pilot, spanning property/casualty, life, and health lines. The NAIC isn't disclosing which companies are being evaluated.

Why most AI agents for insurance aren’t ready for this

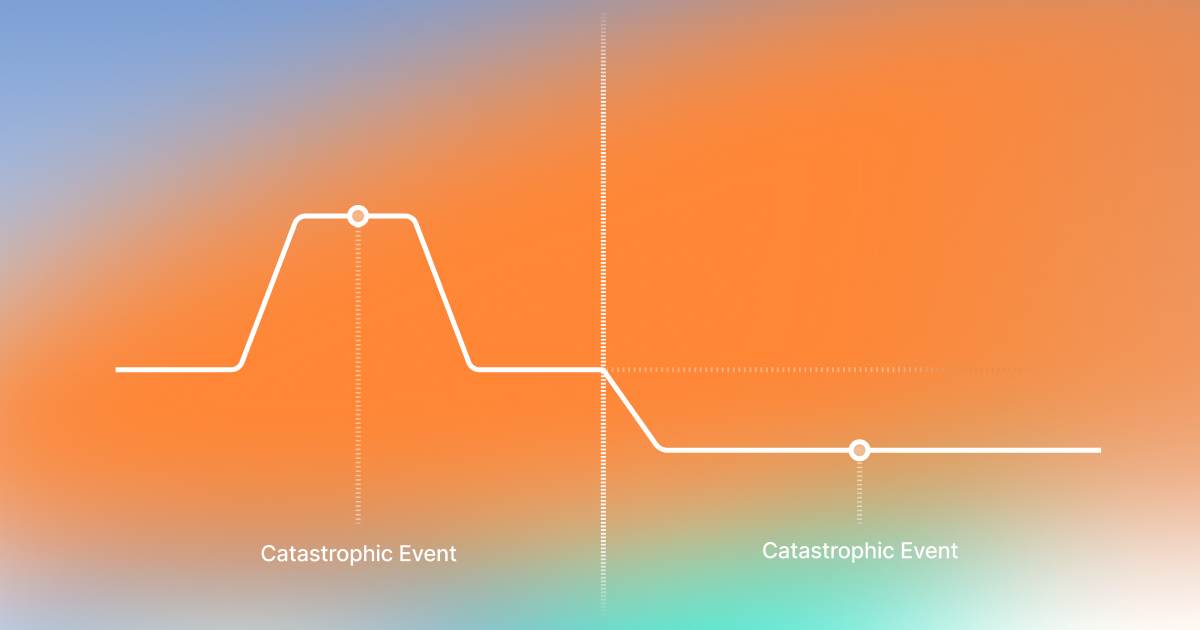

The problem deserves a direct conversation. The majority of AI deployed in insurance customer support was built to hit operational metrics. Containment rates. Deflection percentages. Average handle time. Those numbers justified the investment, and the operational pressure that drove adoption was genuine.

But regulators don't care about your containment rate. They care about whether a company can demonstrate that its AI follows defined policies, produces traceable decisions, and operates within a documented governance structure. That's a different question entirely, and most AI systems were never designed to answer it.

The demo works. Production doesn't.

A policyholder reaches out about a claim. A generic AI agent pulls the status and fires back a response. Ticket closed, metric logged. Looks great on the dashboard.

Now put a time-limited demand letter with a regulatory deadline behind that same claim. Suddenly the AI needs to recognize a compliance trigger, flag the severity, route to the right adjuster based on claim complexity and jurisdiction, and log the entire chain for audit. If the system treats every claim inquiry identically, what you actually have is a resolution rate that looks impressive sitting next to a governance posture that falls apart under examination.
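Here's a minimal sketch of the difference in the demand-letter scenario above: before sending a routine status reply, the system checks for a compliance trigger and, if one exists, escalates with a severity and a logged reason list. The `complexity` score, the seven-day urgency threshold, and the queue names are hypothetical; a real deployment would pull them from claims data and jurisdiction-specific rules.

```python
from dataclasses import dataclass
from datetime import date
from typing import Optional

URGENT_WITHIN_DAYS = 7  # hypothetical internal threshold, not a regulatory figure


@dataclass
class ClaimInquiry:
    claim_id: str
    jurisdiction: str
    complexity: int                  # hypothetical 1-5 score from claims data
    time_limited_demand: bool        # a demand with a response deadline is on file
    demand_deadline: Optional[date] = None


def route(inquiry: ClaimInquiry, today: date) -> dict:
    """Return a routing decision plus the reasons behind it, ready to log for audit."""
    if not inquiry.time_limited_demand:
        return {"action": "send_status", "reasons": ["no compliance trigger"]}
    reasons = ["time-limited demand on file"]
    severity = "standard"
    if inquiry.demand_deadline is not None:
        days_left = (inquiry.demand_deadline - today).days
        reasons.append(f"{days_left} days to deadline")
        if days_left <= URGENT_WITHIN_DAYS:
            severity = "urgent"
    queue = "senior_adjuster" if inquiry.complexity >= 4 else "adjuster"
    return {
        "action": "escalate",
        "queue": f"{inquiry.jurisdiction}:{queue}",
        "severity": severity,
        "reasons": reasons,
    }
```

The reasons list matters as much as the action: it is what lets someone reconstruct later why this inquiry was not treated like every other status check.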

The long tail is where it breaks

We've talked about this before through the lens of the CLEAR framework. Teams build for the happy path, validate with a quick demo, and ship it. Then the real world shows up. Coverage verification across policy types and jurisdictions. Subrogation identification. Fraud flag routing. Disclosure requirements that differ state by state. The demo that took thirty minutes to build becomes six months of engineering, and you're still finding edge cases a year into production.

Regulators get this. The proportionality principle isn't just a bureaucratic sorting mechanism. It reflects a real understanding that the complexity of insurance operations makes automated decisions inherently high-risk. Wrong coverage information harms a policyholder just as much whether it comes from an AI agent or a human, and the insurer answers for it either way. Same regulatory standard. Same accountability.

What governance-ready AI actually looks like

The NAIC pilot makes the requirements concrete. And those requirements trace back to architectural decisions that were either made when the platform was built, or weren't.

Decision logic that a regulator can follow

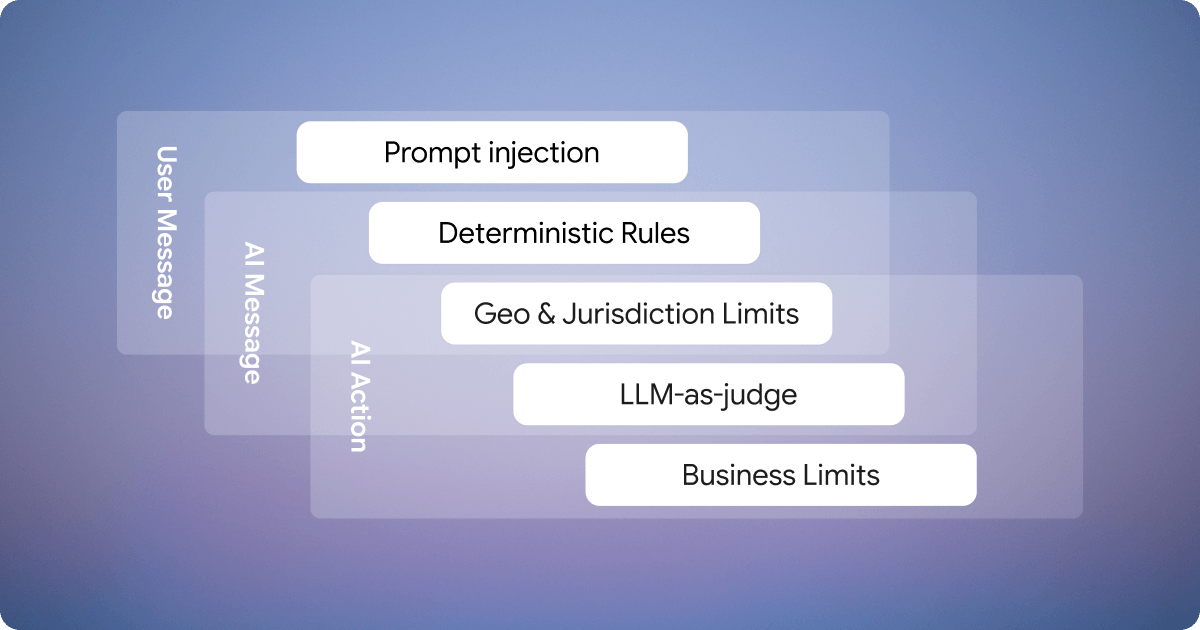

Probabilistic hand-waving doesn't satisfy an examiner. Regulators want to see which rules governed a decision, what data informed it, and the path from input to output. That demands a system where LLM reasoning is bounded by deterministic rules and structured workflows, not a system where the model reasons freely and the team hopes it stays within policy.
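A minimal sketch of what "bounded" can mean in practice: the model proposes an action, but deterministic rules decide whether it executes, and every decision records which rules were checked and which fired. The proposal format, rule names, and allowed-action set are hypothetical, and the model call itself is replaced by a plain dictionary.

```python
from dataclasses import dataclass, field
from typing import Callable, Optional

# A rule inspects the proposal (and optional context) and returns a violation or None
Rule = Callable[[dict, dict], Optional[str]]

ALLOWED_ACTIONS = {"send_status", "send_document", "escalate"}


def action_allowed(proposal: dict, ctx: dict) -> Optional[str]:
    if proposal["action"] not in ALLOWED_ACTIONS:
        return f"action '{proposal['action']}' is not in the allowed set"
    return None


def no_coverage_determination(proposal: dict, ctx: dict) -> Optional[str]:
    # In this sketch, coverage determinations are reserved for humans
    if proposal.get("makes_coverage_determination"):
        return "coverage determinations require human review"
    return None


RULES: dict[str, Rule] = {
    "action_allowed": action_allowed,
    "no_coverage_determination": no_coverage_determination,
}


@dataclass
class Decision:
    executed_action: str
    rules_checked: list[str]
    violations: list[str] = field(default_factory=list)


def gate(proposal: dict, ctx: dict) -> Decision:
    """Run every rule on the model's proposal; fall back to escalation on any violation."""
    violations = []
    for name, rule in RULES.items():
        msg = rule(proposal, ctx)
        if msg:
            violations.append(f"{name}: {msg}")
    action = "escalate" if violations else proposal["action"]
    return Decision(action, list(RULES), violations)
```

The model never executes anything directly; its output is treated as a proposal, and the `Decision` record is what an examiner would be shown.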

Audit trails that exist at execution time

Every interaction, every decision, every escalation needs logging with reasoning and source references. Not reconstructed after someone asks. Not summarized from embeddings. Captured in real time, in a format that works for claims review, QA cycles, and regulatory audits. When a regulator points at a specific policyholder interaction and asks what happened, the answer has to be precise and immediate.
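Here's a hedged sketch of an execution-time audit record: the entry is written in the same code path that takes the action, with the reasoning and source references attached, and the log can be exported as JSON Lines. Chaining each entry to the previous entry's SHA-256 hash is an added assumption for tamper evidence, not something the article specifies.

```python
import hashlib
import json
from datetime import datetime, timezone

GENESIS = "0" * 64  # placeholder "previous hash" for the first entry


def _digest(body: dict) -> str:
    return hashlib.sha256(json.dumps(body, sort_keys=True).encode()).hexdigest()


class AuditLog:
    def __init__(self) -> None:
        self.entries: list[dict] = []

    def record(self, interaction_id: str, action: str, reasoning: str,
               sources: list[str]) -> dict:
        """Append one entry at execution time, linked to the previous entry's hash."""
        entry = {
            "ts": datetime.now(timezone.utc).isoformat(),
            "interaction_id": interaction_id,
            "action": action,
            "reasoning": reasoning,
            "sources": sources,
            "prev_hash": self.entries[-1]["hash"] if self.entries else GENESIS,
        }
        entry["hash"] = _digest(entry)  # hash covers every field except itself
        self.entries.append(entry)
        return entry

    def trail(self, interaction_id: str) -> list[dict]:
        """Everything recorded for one interaction, in execution order."""
        return [e for e in self.entries if e["interaction_id"] == interaction_id]

    def verify(self) -> bool:
        """Recompute the chain; any edited or reordered entry breaks it."""
        prev = GENESIS
        for e in self.entries:
            body = {k: v for k, v in e.items() if k != "hash"}
            if body["prev_hash"] != prev or _digest(body) != e["hash"]:
                return False
            prev = e["hash"]
        return True

    def to_jsonl(self) -> str:
        return "\n".join(json.dumps(e, sort_keys=True) for e in self.entries)
```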

Workflows that respect jurisdictional complexity

Insurance isn't one workflow. It's dozens, maybe hundreds, that vary by line of business, jurisdiction, policy type, and customer tier. A first-party property claim in Florida requires different escalation paths than a commercial liability claim in Pennsylvania. Different disclosures. Different approval chains. If your AI treats these identically, you've built a compliance problem that compounds with every ticket resolved.
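One way to keep that variation explicit is a workflow table keyed by line of business and jurisdiction that fails closed when no workflow is defined. The keys, disclosure names, and approval chains below are invented placeholders, not actual Florida or Pennsylvania requirements.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Workflow:
    escalation_path: tuple[str, ...]
    required_disclosures: tuple[str, ...]
    approval_chain: tuple[str, ...]


# (line_of_business, jurisdiction) -> workflow. Placeholder values only.
WORKFLOWS = {
    ("property_first_party", "FL"): Workflow(
        escalation_path=("property_adjuster", "property_supervisor"),
        required_disclosures=("fl_property_disclosure_placeholder",),
        approval_chain=("adjuster",),
    ),
    ("commercial_liability", "PA"): Workflow(
        escalation_path=("liability_adjuster", "coverage_counsel"),
        required_disclosures=("pa_liability_disclosure_placeholder",),
        approval_chain=("adjuster", "claims_manager"),
    ),
}


class NoWorkflowDefined(LookupError):
    pass


def workflow_for(line: str, jurisdiction: str) -> Workflow:
    """Fail closed: an unmapped combination is an error, never a silent default."""
    try:
        return WORKFLOWS[(line, jurisdiction)]
    except KeyError:
        raise NoWorkflowDefined(f"no workflow for {line} in {jurisdiction}") from None
```

Failing closed is the design choice that matters here: a generic fallback workflow is exactly how a system ends up treating a Florida property claim like a Pennsylvania liability claim.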

Escalation boundaries defined by policy

Not everything should be resolved autonomously. The boundaries for human handoff need to come from business rules and regulatory requirements, not from a confidence score threshold. Certain disclosures must be delivered in full. Certain decisions require human judgment. Certain triggers demand immediate escalation to specialized teams. The system needs to enforce those rules at volume, consistently, without exception.
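A minimal sketch of escalation boundaries written as business rules rather than a confidence threshold. The trigger names, intents, and team names are hypothetical. Note that the model's confidence score is deliberately not an input, so a confident model cannot talk its way past a mandatory handoff.

```python
from typing import Optional

# Hypothetical policy table, listed in priority order (dicts preserve insertion order)
MANDATORY_ESCALATIONS = {
    "fraud_flag": "special_investigations",
    "complaint_to_regulator": "compliance",
    "time_limited_demand": "senior_adjuster",
}

# Intents that always require human judgment in this sketch
HUMAN_JUDGMENT_INTENTS = {"coverage_denial", "claim_settlement_offer"}


def escalation_target(intent: str, triggers: set[str]) -> Optional[str]:
    """Return the team to hand off to, or None if autonomous handling is permitted."""
    for trigger, team in MANDATORY_ESCALATIONS.items():
        if trigger in triggers:
            return team  # highest-priority trigger wins
    if intent in HUMAN_JUDGMENT_INTENTS:
        return "claims_review"
    return None
```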

Security that's structural

Policyholder data, claims details, and coverage information all fall under regulated data categories. Encrypted integrations, data residency controls, and certifications like SOC 2 Type II and ISO 27001 are table stakes. If your AI vendor treats these as premium add-ons, that tells you something about how the platform was designed.

Where the industry is going next: from governance to evaluation

The NAIC framework does something important beyond regulatory oversight. It establishes the first real signal that AI systems in insurance will be evaluated against consistent standards.

What it doesn’t yet provide is a way for insurers to proactively test their systems before a regulator does.

Today, most carriers have no clear way to answer questions like:

- Does our AI behave consistently across edge cases?

- Where does it fail under regulatory pressure?

- Can it reliably escalate when required by policy?

- How does it perform across jurisdictions and product lines?
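Questions like these lend themselves to scenario-based testing. Below is a hedged sketch of a tiny harness that runs an agent's decision function against labeled scenarios and reports a pass rate per jurisdiction, so inconsistencies across states stand out. The scenario format and the deliberately flawed `toy_agent` are hypothetical; in practice the function under test would wrap the production agent.

```python
from collections import defaultdict
from dataclasses import dataclass
from typing import Callable


@dataclass
class Scenario:
    name: str
    jurisdiction: str
    intent: str
    triggers: frozenset
    expected: str  # expected action, e.g. "escalate" or "resolve"


def evaluate(agent: Callable[[Scenario], str], scenarios: list[Scenario]) -> dict[str, float]:
    """Pass rate per jurisdiction, sorted by jurisdiction code."""
    passed: dict[str, int] = defaultdict(int)
    total: dict[str, int] = defaultdict(int)
    for s in scenarios:
        total[s.jurisdiction] += 1
        if agent(s) == s.expected:
            passed[s.jurisdiction] += 1
    return {j: passed[j] / total[j] for j in sorted(total)}


def toy_agent(s: Scenario) -> str:
    # Stand-in agent with a planted bug: it only honors triggers in FL
    if s.triggers and s.jurisdiction == "FL":
        return "escalate"
    return "resolve"


scenarios = [
    Scenario("fl-demand", "FL", "claim_status", frozenset({"time_limited_demand"}), "escalate"),
    Scenario("fl-status", "FL", "claim_status", frozenset(), "resolve"),
    Scenario("pa-demand", "PA", "claim_status", frozenset({"time_limited_demand"}), "escalate"),
    Scenario("pa-status", "PA", "claim_status", frozenset(), "resolve"),
]
print(evaluate(toy_agent, scenarios))  # {'FL': 1.0, 'PA': 0.5}
```

A single blended pass rate for this agent would read 75% and hide the fact that every Pennsylvania escalation fails; splitting by jurisdiction is what exposes it.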

That gap creates a new category: AI system evaluation and benchmarking for insurance.

The companies that move first won’t just pass regulatory reviews. They’ll define what “good” looks like.

How Notch is built for this

We didn't retrofit governance capabilities after the NAIC started asking questions. The platform was designed from the ground up for regulated industries where AI decisions carry real consequences. But governance alone isn’t enough. As regulators formalize how AI is assessed, insurers also need a way to evaluate their own systems before those exams happen. Notch is built not just to operate AI in production, but to make its behavior measurable, testable, and provable against emerging regulatory standards.

Governance lives in the execution layer

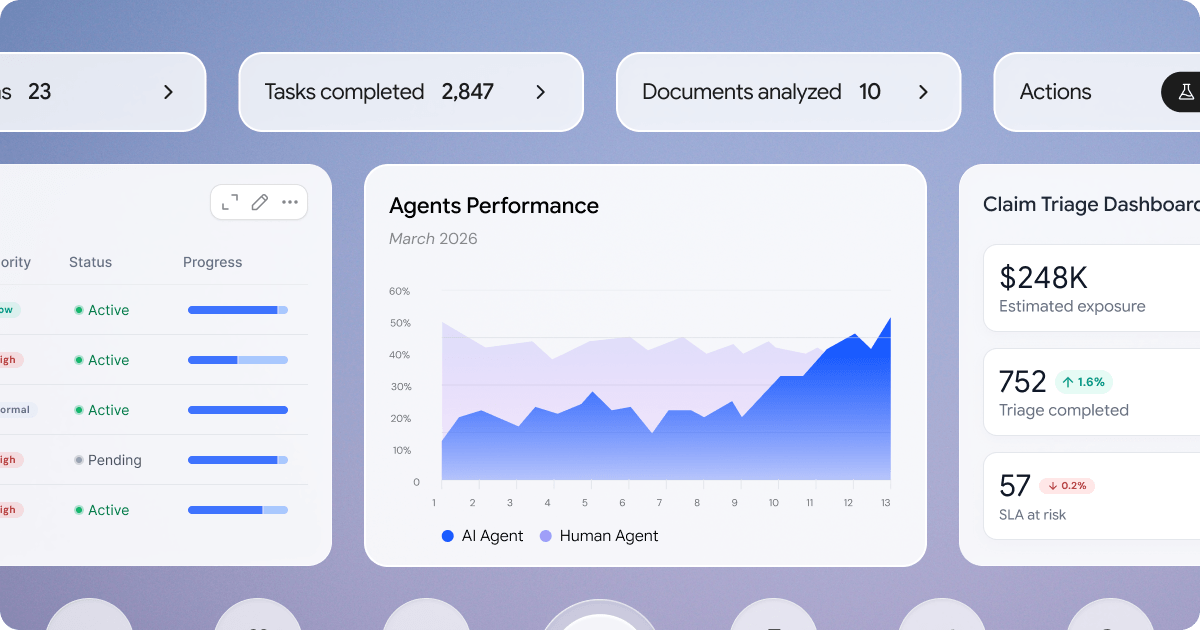

Notch doesn't bolt compliance onto a general-purpose AI engine. Over 40 specialized agents operate inside structured workflows where policies, escalation rules, and compliance constraints can be configured per line of business, geography, and customer segment. Governance isn't a reporting layer. It's the architecture.

Every action is traceable

When a regulator, or your own QA team, asks why the system took a specific action on a specific interaction, the answer is already there. Which data was used. Which rules applied. Which workflow governed the resolution. That's the kind of traceability that Exhibits B and C are designed to test, and it's been part of Notch since day one.

Production insurance workflows, not demo scenarios

Claims intake with severity prioritization and deadline detection. Policy servicing with endorsement processing, coverage clarification, and document fulfillment. Billing inquiries with payment routing and cancellation avoidance. These run in production today across insurance carriers, complete with the deterministic validation layers, configurable guardrails, and full audit trails that the NAIC framework evaluates.

We're SOC 2 Type II and ISO 27001 certified. Encrypted API integrations keep policyholder and claims data within your secure infrastructure. When regulators get to Exhibit D and start asking about data protection and integrity, the answers are already built into how Notch operates.

Notch as an evaluation layer for AI agents

The next phase of this market isn’t just better AI systems. It’s better ways to evaluate them.

Notch enables insurers to:

- Test agent behavior against real-world scenarios, not just happy paths

- Measure consistency across jurisdictions, products, and edge cases

- Validate escalation behavior against policy and regulatory requirements

- Inspect explainability at the level regulators expect

- Benchmark operational readiness before systems reach production

This shifts AI from something you deploy to something you can continuously evaluate and improve.

As regulatory frameworks like the NAIC tool evolve, the ability to demonstrate performance against clear standards becomes a competitive advantage. Notch is designed to make that measurable.

What to do between now and November

The pilot wraps in September. The tool gets refined in October. Adoption is expected in November. That's not next year's problem. It's this year's planning cycle.

Stress-test what you're running today

Can you produce a complete inventory of every AI system that touches a policyholder? Can you demonstrate governance that actually operates in production, not just in documentation? Can you pull the full decision trail for any given interaction and hand it to an examiner? Can you prove your data handling meets the standards the tool evaluates? If any of those answers involve qualifying language or workarounds, you know where the gaps are.

Challenge your vendors

If you're evaluating AI platforms right now, skip the demo metrics and go straight to the governance questions. Can this vendor produce a complete audit trail for any interaction? Can they show you decision logic, not just the output? What happens when the AI hits an edge case outside its training? How the vendor responds will tell you more about production readiness than any resolution rate in a pitch deck.

Move before formal adoption forces your hand

The pilot doesn't create new legal requirements. Regulators have been clear about that. But it establishes a structured framework for how AI governance will be assessed from here forward. Insurers who build governance infrastructure now end up with a competitive advantage: smoother regulatory interactions, stronger carrier relationships, and the ability to push AI into higher-complexity workflows without worrying about what the next exam turns up.

Companies that treat governance as a feature, not a constraint, will be the ones scaling autonomously. The rest will spend the next cycle walking examiners through systems they can't fully explain.

We built Notch so that conversation never has to happen. Talk to our team about how Notch maps to the NAIC AI Systems Evaluation framework.

Key Takeaways

- The NAIC's AI Systems Evaluation Tool pilot launched in March 2026 across twelve states, with insurers already receiving structured questionnaires from their domiciliary regulators.

- AI agents handling policyholder interactions like claims inquiries, endorsement processing, billing disputes, and certificate issuance face the deepest scrutiny.

- Systems built around containment rates and deflection metrics can resolve tickets at speed, but they often lack the deterministic decision logic, audit trails, and policy-governed workflows that regulators are now asking to see.

- The four exhibits in the evaluation tool probe whether AI governance is embedded in how a system actually operates, from decision logic and data lineage through to escalation boundaries and jurisdictional workflow variation.

- The pilot wraps in September, the tool gets refined through October, and formal adoption is targeted for the NAIC fall meeting in November 2026.

Got Questions? We’ve Got Answers

The NAIC AI Systems Evaluation Tool pilot runs from March through September 2026. Refinements are expected in October. Formal nationwide adoption is anticipated at the fall NAIC meeting in November 2026. That means you have roughly seven months before the evaluation methodology becomes the standard lens for regulatory examinations. If you're still running AI systems that lack traceability, jurisdictional workflow differentiation, or documented governance, now is the window to close those gaps.

The twelve participating states are California, Colorado, Connecticut, Florida, Iowa, Louisiana, Maryland, Pennsylvania, Rhode Island, Vermont, Virginia, and Wisconsin. Each state is selecting between one and more than ten domestic insurers across property/casualty, life, and health lines. Even if you're domiciled elsewhere, this matters. The pilot is building the evaluation methodology that regulators nationwide are expected to adopt after the November 2026 NAIC meeting. Waiting until your state formally adopts the tool means you're scrambling to respond rather than operating from a position of readiness.

Regulatory liability for AI decisions stays with you as the insurer, regardless of who built the technology. The NAIC framework makes no distinction between internally developed AI and vendor-purchased systems. Your vendor's compliance posture, their certifications, their internal testing: none of that transfers your accountability. If a regulator examines how your AI handled a coverage inquiry or claims decision, the question lands on your desk, not your vendor's.

The pilot does not directly create new requirements. The NAIC has stated that the evaluation tool doesn't establish new legal obligations or suggest that any particular governance framework is inadequate. But it does create a structured method for regulators to assess AI governance, and responses could influence how regulators evaluate an insurer's risk profile going forward. The practical effect is that governance gaps identified during the pilot may attract closer regulatory attention in future examination cycles.

The cost of waiting isn't just regulatory. Carriers that delay AI governance work until formal adoption are stacking up three different types of exposure simultaneously. First, there's the operational scramble: building traceability, audit infrastructure, and jurisdictional workflow logic under time pressure costs significantly more than building it deliberately. Second, there's the competitive disadvantage: carriers with governance infrastructure already in place will be able to push AI into higher-complexity workflows while their peers are still trying to prove their existing deployments are defensible. Third, there's the relationship risk: regulators remember which carriers engage proactively and which ones show up reactive and unprepared.
