Engineering Personalized Trust

AI is easy to demo. Trust is hard to build.

Anyone today can spin up an LLM and make it answer questions. Very few can deploy AI that securely cancels an insurance policy, updates sensitive customer data, or triggers regulated workflows inside enterprise systems while maintaining compliance, security, and user trust.

In regulated industries, every AI action carries compliance, financial, and legal consequences. The bar isn't "does it work" - it's "can you prove it worked correctly, to a regulator, six months from now."

At Notch, that's exactly what we build. Notch is the AI Operating System for Regulated Industries - we deploy AI agents that resolve operational workflows end-to-end for insurance carriers and financial services firms, backed by $30M in Series A funding from Headline, Lightspeed Venture Partners, and others.

We build AI agents that don't just talk - they authenticate, integrate, and act inside regulated environments. And we deploy them in weeks, not after quarters of integration work.

This is what that actually means.

Personalization Is Infrastructure, Not Prompting

When people hear "personalized AI," they often think about tone, brand voice, or prompt engineering. That's the easy part.

Real personalization, especially in regulated industries like insurance, fintech, and healthcare, means adapting to each organization's infrastructure and operational reality. That includes different authentication flows, identity providers, compliance rules, ticketing systems, back-office architectures, and risk tolerances. No two brands operate the same way.

Our job isn't tweaking prompts. Our job is embedding AI directly into mission-critical infrastructure, securely and reliably, in a way that feels native to each organization. That applies to both sides of the platform: conversational workflows where AI agents interact with policyholders and brokers, and back-office workflows where the system ingests documents, extracts structured data, classifies and routes submissions, and executes operational tasks behind the scenes.

That's engineering work.

Example #1: OTP-Based Authentication in a Policy Cancellation Flow

Let's take a real scenario.

A customer wants to cancel their insurance policy. Before we can execute that action, we must verify their identity. No shortcuts. No assumptions.

The challenge is not just technical - it's experiential. The AI agent is handling the conversation, the user is requesting a sensitive action, and the system must authenticate them before proceeding. At the same time, the experience has to feel seamless rather than forcing the customer to bounce between disconnected systems.

For this client, authentication was OTP-based. When the AI detected a sensitive intent such as policy cancellation, it triggered OTP generation through the client's backend. The one-time password was then sent to the customer through an approved channel. The customer entered the OTP directly in the conversation, and the AI validated it against a secure backend service. Once validation succeeded, the session state was marked as authenticated, scoped permissions were granted, and the cancellation workflow could continue - all within a single conversational experience.

What makes this interesting from an engineering standpoint is that it goes far beyond simply sending a code and comparing strings. We had to manage secure conversational state, handle OTP expiration and retry logic, protect against brute-force attempts, prevent sensitive data leakage, and ensure compliance logging throughout the process. Just as importantly, all of this had to work within a chat-driven user experience that still felt natural and frictionless.
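To make the moving parts concrete, here is a minimal Python sketch of the expiration, retry, and brute-force handling described above. It is an illustration under stated assumptions, not the production implementation: the names `OtpChallenge`, `issue_otp`, and `verify_otp`, the 6-digit code length, the 5-minute lifetime, and the 3-attempt budget are all hypothetical, and delivery of the code through the approved channel is left out.

```python
import hashlib
import hmac
import secrets
import time
from dataclasses import dataclass
from typing import Optional, Tuple

OTP_TTL_SECONDS = 300  # assumed validity window (hypothetical value)
MAX_ATTEMPTS = 3       # assumed retry budget before lockout (hypothetical value)


@dataclass
class OtpChallenge:
    salt: bytes
    digest: bytes        # salted hash of the code; the plaintext is never stored
    expires_at: float
    attempts_left: int = MAX_ATTEMPTS


def _digest(code: str, salt: bytes) -> bytes:
    return hashlib.sha256(salt + code.encode("utf-8")).digest()


def issue_otp(now: Optional[float] = None) -> Tuple[str, OtpChallenge]:
    """Return a 6-digit code (to send out of band) and its server-side record."""
    now = time.time() if now is None else now
    code = f"{secrets.randbelow(10**6):06d}"  # cryptographically secure randomness
    salt = secrets.token_bytes(16)
    return code, OtpChallenge(salt, _digest(code, salt), now + OTP_TTL_SECONDS)


def verify_otp(challenge: OtpChallenge, submitted: str,
               now: Optional[float] = None) -> str:
    """Return 'ok', 'invalid', 'expired', or 'locked'."""
    now = time.time() if now is None else now
    if now >= challenge.expires_at:
        return "expired"
    if challenge.attempts_left <= 0:
        return "locked"
    challenge.attempts_left -= 1  # every guess spends budget, right or wrong
    # Constant-time comparison avoids leaking how much of the guess matched.
    if hmac.compare_digest(_digest(submitted, challenge.salt), challenge.digest):
        challenge.attempts_left = 0  # single use: a verified code cannot be replayed
        return "ok"
    return "invalid"
```

In a conversational flow, only an `"ok"` result would mark the session as authenticated. The other three results map to distinct replies (ask again, send a new code, or hand off to a human) without revealing anything about the expected code.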

And we implemented it as part of a production deployment within days.

The AI didn't just respond. It securely executed an action on behalf of a verified customer.

Example #2: Enterprise Auth0 Integration for a Large Insurance Company

Now let's look at a more complex case.

A large insurance enterprise had strict identity and access management requirements and was already using Auth0 as its identity provider. Replacing that system was not an option, so we embedded ourselves into it.

This environment came with all the complexity you would expect from enterprise IAM: token-based authentication, strict session validation rules, and a zero-trust security posture.

We implemented a secure token-based flow using Auth0. When the AI detected a sensitive action request, the user was prompted through a secure Auth0 authentication flow. Auth0 then issued a scoped access token, which was securely passed into our integration layer and validated before anything else happened. Backend services verified the token's signature, checked expiration, and confirmed the relevant scope permissions. Only after all of those checks passed did the AI execute the requested action.
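The three backend checks (signature, expiration, scope) can be sketched in a few lines of Python. This is a simplified, standard-library-only illustration with loud assumptions: it verifies an HS256 token with a shared secret so the example stays self-contained, whereas Auth0 APIs commonly sign with RS256 and publish keys via JWKS, and a real deployment would use a maintained JWT library rather than hand-rolled parsing. The names `verify_token`, `sign_token`, and `TokenError`, and the `policy:cancel` scope used in the test, are hypothetical.

```python
import base64
import hashlib
import hmac
import json
import time
from typing import Optional


class TokenError(Exception):
    """Raised when a token fails any check; the caller must not act."""


def _b64url_decode(part: str) -> bytes:
    return base64.urlsafe_b64decode(part + "=" * (-len(part) % 4))


def _b64url_encode(raw: bytes) -> str:
    return base64.urlsafe_b64encode(raw).rstrip(b"=").decode("ascii")


def sign_token(claims: dict, secret: bytes) -> str:
    """Helper for local experiments only: mint an HS256 token."""
    header = _b64url_encode(json.dumps({"alg": "HS256", "typ": "JWT"}).encode())
    payload = _b64url_encode(json.dumps(claims).encode())
    sig = hmac.new(secret, f"{header}.{payload}".encode(), hashlib.sha256).digest()
    return f"{header}.{payload}.{_b64url_encode(sig)}"


def verify_token(token: str, secret: bytes, required_scope: str,
                 now: Optional[float] = None) -> dict:
    """Check signature, then expiration, then scope; return the claims."""
    now = time.time() if now is None else now
    try:
        header_b64, payload_b64, sig_b64 = token.split(".")
        header = json.loads(_b64url_decode(header_b64))
        signature = _b64url_decode(sig_b64)
    except ValueError as exc:  # wrong part count, bad base64, or bad JSON
        raise TokenError("malformed token") from exc
    # Pin the algorithm instead of trusting whatever the header claims.
    if not isinstance(header, dict) or header.get("alg") != "HS256":
        raise TokenError("unexpected algorithm")
    expected = hmac.new(secret, f"{header_b64}.{payload_b64}".encode(),
                        hashlib.sha256).digest()
    if not hmac.compare_digest(signature, expected):
        raise TokenError("bad signature")
    try:
        claims = json.loads(_b64url_decode(payload_b64))
    except ValueError as exc:
        raise TokenError("malformed payload") from exc
    if not isinstance(claims, dict):
        raise TokenError("malformed payload")
    exp = claims.get("exp")
    if not isinstance(exp, (int, float)) or exp <= now:
        raise TokenError("token expired")
    # OAuth 2.0 scopes are a space-delimited string.
    if required_scope not in str(claims.get("scope", "")).split():
        raise TokenError("missing required scope")
    return claims
```

The order matters: nothing in the payload is trusted, or even parsed, until the signature has been verified, and any failure raises rather than returning a partial result.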

The complexity here wasn't just in making Auth0 work. It was in handling token lifecycle management, secure storage, session continuity across conversational boundaries, enterprise-scale error handling, workflow-specific permission scoping, and strict audit logging.

We didn't replace their identity layer. We adapted to it.

That's what real AI integration looks like in regulated environments.

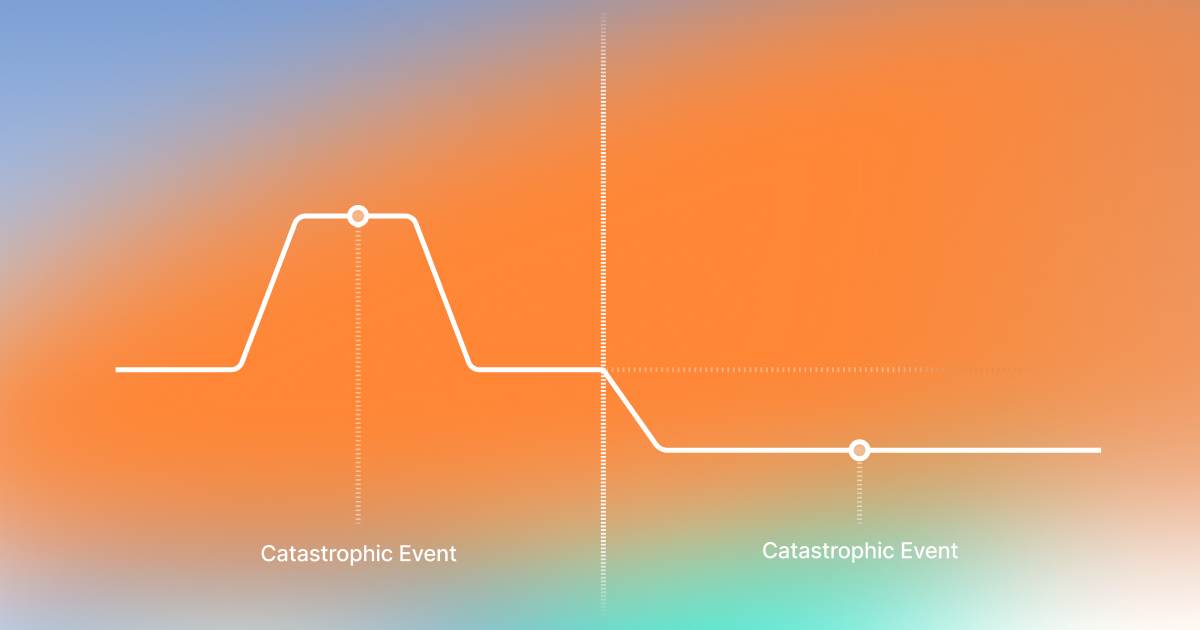

The Architecture Behind It

At a high level, our system spans several layers. It begins with the customer interface, whether that's chat, email, or voice. From there, requests flow into the Notch AI agent layer, where intent detection, orchestration, authentication, workflow execution, and state management all take place.

Behind that sits the integration layer, which connects the AI to ticketing systems, CRM platforms, policy management systems, and identity providers such as Auth0 or OTP services. Finally, everything reaches the client's back-office systems, where the actual business actions happen.

Every sensitive action moves through the same core controls. At Notch, these are formalized as our five-layer guardrail architecture: LLM-as-judge guardrails for conversation safety and policy enforcement, technical defenses against prompt injection and adversarial misuse, deterministic access rules tied to verified identity and role, hard business limits with deterministic thresholds for high-risk actions, and jurisdiction-aware rules that adapt to applicable regulatory regimes. For a deeper look at how this works, see our technical breakdown of guardrails and escalations for AI agents in regulated industries.
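The deterministic layers in that list (access rules, hard business limits, and jurisdiction-aware rules) lend themselves to a short sketch. The Python below is a hypothetical illustration of the general pattern, not the actual guardrail implementation: the action names, roles, refund limit, and jurisdiction code are invented for the example, and the LLM-as-judge and prompt-injection layers are out of scope here.

```python
from dataclasses import dataclass
from typing import Callable, Optional, Sequence, Tuple


@dataclass(frozen=True)
class ActionRequest:
    action: str
    role: str
    authenticated: bool
    amount: float
    jurisdiction: str


# A check returns a denial reason, or None when it has no objection.
Check = Callable[[ActionRequest], Optional[str]]

# All values below are hypothetical placeholders.
ALLOWED_ROLES = {
    "cancel_policy": {"policyholder", "broker"},
    "issue_refund": {"policyholder"},
}
REFUND_HARD_LIMIT = 500.0
BLOCKED_BY_JURISDICTION = {("issue_refund", "XX")}


def access_rule(req: ActionRequest) -> Optional[str]:
    if not req.authenticated:
        return "identity not verified"
    if req.role not in ALLOWED_ROLES.get(req.action, set()):
        return "role not permitted for action"
    return None


def business_limit(req: ActionRequest) -> Optional[str]:
    if req.action == "issue_refund" and req.amount > REFUND_HARD_LIMIT:
        return "amount exceeds hard limit"
    return None


def jurisdiction_rule(req: ActionRequest) -> Optional[str]:
    if (req.action, req.jurisdiction) in BLOCKED_BY_JURISDICTION:
        return "action not available in jurisdiction"
    return None


DEFAULT_CHECKS: Sequence[Check] = (access_rule, business_limit, jurisdiction_rule)


def evaluate(req: ActionRequest,
             checks: Sequence[Check] = DEFAULT_CHECKS) -> Tuple[bool, Optional[str]]:
    """Run checks in order; the first denial wins and is returned as the reason."""
    for check in checks:
        reason = check(req)
        if reason is not None:
            return False, reason
    return True, None
```

Because these checks are plain functions over verified facts, the same request always produces the same decision and the same reason, which is what makes the outcome explainable after the fact.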

The AI never operates in isolation. It operates inside a secure, modular system designed for extensibility and compliance.

And that architecture must adapt for every client.

What Delivery Engineering Looks Like at Notch

This isn't prompt engineering. It's delivery engineering.

A typical week might include designing a secure conversational flow for a sensitive action like policy cancellation or claims intake, integrating with a client's production CRM or policy engine, implementing authentication through OAuth, OTP, or Auth0, debugging a token lifecycle issue across conversational session boundaries, and making sure compliance logging is airtight before a deployment goes live.

The work is backend and systems engineering applied to AI. It requires working directly with security constraints, identity and access management, observability, logging, and reliability under real user traffic. And unlike internal demos, the systems you build are handling real customer interactions in production from day one.

You ship enterprise-grade deployments in weeks, not prototypes in isolation.

Ownership in Regulated Environments

When an AI agent cancels a policy, it matters. When authentication fails, it matters. When tokens are mishandled, it matters.

We operate in environments where trust is not optional. Every interaction may involve sensitive customer data, regulated workflows, and actions that directly affect a user's account or financial state. That creates a different kind of engineering culture.

In regulated environments, building AI agents means constantly thinking about reliability, security, and accountability. It means thinking in threat models before writing the first line of code, considering how authentication, permissions, and integrations could fail or be exploited. It means designing for failure states so that when authentication breaks, tokens expire, or backend systems become unavailable, the system fails safely and predictably.
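One way to read "fails safely and predictably" is as a fail-closed rule: if the system cannot confirm authorization, it does not act, and if the backend is down, it escalates rather than retrying blindly. The Python sketch below illustrates that idea only; `run_sensitive_action`, `Outcome`, and `BackendUnavailable` are hypothetical names, not part of any real API.

```python
from enum import Enum
from typing import Callable


class Outcome(Enum):
    EXECUTED = "executed"
    DENIED = "denied"
    ESCALATED = "escalated"


class BackendUnavailable(Exception):
    """Hypothetical error raised when a back-office system cannot be reached."""


def run_sensitive_action(is_authorized: Callable[[], bool],
                         execute: Callable[[], None]) -> Outcome:
    try:
        allowed = is_authorized()
    except Exception:
        # Fail closed: an auth check that errors is treated as a denial,
        # never as permission. The broad catch is deliberate here.
        return Outcome.DENIED
    if not allowed:
        return Outcome.DENIED
    try:
        execute()
    except BackendUnavailable:
        # The customer is verified but the action could not run:
        # hand off to a human instead of guessing at the system's state.
        return Outcome.ESCALATED
    return Outcome.EXECUTED
```

Each failure mode maps to exactly one named outcome, so the conversation layer can respond predictably and the result can be logged as-is.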

It also means maintaining full auditability, with authentication events, workflow actions, and system decisions logged so that every sensitive action can be traced and verified. It means handling identity and access management correctly by validating tokens, enforcing scopes, and ensuring permissions are respected across every workflow the AI touches. And it means building systems that protect customer data while still enabling conversational AI to take meaningful action.
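As a rough illustration of traceable logging that still protects customer data, the Python sketch below emits one structured JSON record per event and redacts sensitive fields before anything is written. The function name `audit_event`, the field names, and the `SENSITIVE_KEYS` set are assumptions made for the example, not a description of the actual logging schema.

```python
import json
from datetime import datetime, timezone
from typing import Any, Dict, Optional

# Hypothetical list of fields that must never reach the log in clear text.
SENSITIVE_KEYS = {"otp", "access_token", "ssn"}


def audit_event(event_type: str, actor_id: str, session_id: str, outcome: str,
                details: Dict[str, Any],
                now: Optional[datetime] = None) -> str:
    """Build one JSON log line for an authentication or workflow event."""
    if now is None:
        now = datetime.now(timezone.utc)
    # Redact before serializing so secrets never exist in the log pipeline.
    safe_details = {
        key: "[REDACTED]" if key in SENSITIVE_KEYS else value
        for key, value in details.items()
    }
    record = {
        "timestamp": now.isoformat(),
        "event_type": event_type,
        "actor_id": actor_id,
        "session_id": session_id,
        "outcome": outcome,
        "details": safe_details,
    }
    # Sorted keys keep lines stable, which makes diffs and searches easier.
    return json.dumps(record, sort_keys=True)
```

A call such as `audit_event("otp_verified", "cust-1", "sess-9", "ok", {"otp": "123456", "channel": "sms"})` records that the verification happened and over which channel, while the code itself is replaced with `[REDACTED]`.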

Most importantly, it means moving fast without compromising safety - shipping production deployments quickly while maintaining strict compliance, security, and reliability standards.

Engineers here do not just write features. They build systems that customers rely on for real decisions.

Building Personalized Trust at Scale

AI in regulated industries isn't about replacing humans. It's about embedding intelligence into secure, compliant workflows that already power critical systems.

We've processed over 20 million conversations in production across regulated environments. The next chapter is scaling this across the US insurance and financial services market, with deeper workflow coverage and more sophisticated orchestration across multi-agent pipelines. The engineering problems only get harder from here.

If you're excited about building AI systems that act, not just answer - working at the intersection of AI, security, and infrastructure in production - we're hiring across engineering. See open roles at notch.cx/careers.
