AI Chatbot vs. AI Agent: Customer Support Guide

AI Chatbot vs. AI Customer Service Agent: What's the Difference?

The terms AI chatbot and AI customer service agent get used interchangeably in vendor decks and procurement conversations. That confusion costs operations teams a lot of money. Chatbots and AI customer service agents are not variations of the same technology. They are built for different purposes. The question this article answers is not which one sounds better. It's which one your operation actually needs.

What Is a Chatbot?

A chatbot is a rule-based or scripted tool responding to user inputs through predefined decision trees or keyword matching. It is reactive and narrow by design, built to handle a fixed set of queries within a fixed set of parameters. When an interaction falls outside those parameters, a chatbot fails, redirects, or loops.

The critical thing to understand about chatbots is that they match keywords or patterns rather than reason about true intent. A user input triggers a predefined output, which works when the problem is small and stable. There is no memory across sessions, limited context within them, and no capacity to adapt when a customer's question doesn't fit the flow. Most chatbot failures aren’t technical; they’re failures of scope. The system was asked to do something it wasn't built for, and no one caught the gap before it went live.
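A minimal Python sketch of that matching behaviour may make the scope problem concrete. Everything in it is an illustrative assumption: the `RULES` table, the canned replies, and the `chatbot_reply` function are hypothetical and do not represent any vendor's implementation.

```python
import re

# Hypothetical keyword rules: each tuple of trigger words maps to one canned reply.
RULES = {
    ("hours", "open", "closing"): "We are open 9am to 5pm, Monday to Friday.",
    ("password", "reset"): "Use the 'Forgot password' link on the login page.",
}
FALLBACK = "Sorry, I didn't understand. Please rephrase or contact support."


def chatbot_reply(message: str) -> str:
    """Return the canned reply for the first rule whose keyword appears."""
    words = set(re.findall(r"[a-z']+", message.lower()))
    for keywords, reply in RULES.items():
        if words & set(keywords):
            return reply
    return FALLBACK


# In scope: a keyword matches and the canned answer is correct.
print(chatbot_reply("What are your opening hours?"))
# Keyword present, intent different: the bot answers the wrong question.
print(chatbot_reply("My claim was denied after I reset my password, why?"))
# No keyword at all: the bot falls back.
print(chatbot_reply("I was charged twice for my policy."))
```

The second message mentions a password reset only in passing, yet the keyword match fires the password reply and ignores the denied claim. The third matches nothing and drops to the fallback. Neither failure is a bug in the code; both are failures of scope.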

What Is an AI Customer Service Agent?

An AI agent is an autonomous system that uses reasoning to solve problems. It can navigate multiple steps, access your internal tools, and handle complex requests without following a rigid script. The distinction from a chatbot is architectural. An AI agent doesn't follow a decision tree. It interprets intent, builds a resolution path, and executes it.

Where a chatbot matches input to output, an AI agent understands what the customer needs, not just what they literally typed. It integrates with backend systems, including CRMs, policy databases, and order management platforms, to take action rather than only provide information. Context is retained across a conversation and, in more advanced implementations, across sessions. In insurance customer support, this translates to an agent that verifies coverage, checks claim status, initiates a document request, and confirms next steps in a single interaction, without human intervention.
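The insurance example can be sketched in Python to show what "action in connected systems" looks like structurally. This is a simplified sketch under stated assumptions: the `POLICIES` and `CLAIMS` dictionaries are stubs standing in for real backends, all function names are hypothetical, and the hard-coded sequence in `handle_claim_inquiry` is a stand-in for the reasoning step a real agent would perform.

```python
from dataclasses import dataclass, field

# Stub backends (assumptions) standing in for a policy database and a claims system.
POLICIES = {"P-100": {"active": True, "covers": {"water_damage", "theft"}}}
CLAIMS = {"C-9": {"policy": "P-100", "peril": "water_damage",
                  "status": "awaiting_documents"}}


@dataclass
class Conversation:
    """Context carried across every step of one interaction."""
    claim_id: str
    log: list = field(default_factory=list)


def verify_coverage(ctx: Conversation) -> bool:
    claim = CLAIMS[ctx.claim_id]
    policy = POLICIES[claim["policy"]]
    covered = policy["active"] and claim["peril"] in policy["covers"]
    ctx.log.append(("verify_coverage", covered))
    return covered


def check_claim_status(ctx: Conversation) -> str:
    status = CLAIMS[ctx.claim_id]["status"]
    ctx.log.append(("check_claim_status", status))
    return status


def request_documents(ctx: Conversation) -> None:
    # Takes action in the (stubbed) claims system instead of only describing it.
    CLAIMS[ctx.claim_id]["status"] = "documents_requested"
    ctx.log.append(("request_documents", "sent"))


def handle_claim_inquiry(claim_id: str):
    """Hard-coded plan standing in for an agent's reasoning (an assumption)."""
    ctx = Conversation(claim_id)
    if not verify_coverage(ctx):
        # Coverage questions are not decided here; they go to a person.
        ctx.log.append(("escalate", "coverage_review"))
        return ctx, "A specialist will review your coverage and contact you."
    status = check_claim_status(ctx)
    if status == "awaiting_documents":
        request_documents(ctx)
        return ctx, "Coverage confirmed. A document request has been sent to you."
    return ctx, f"Coverage confirmed. Current claim status: {status}."


ctx, reply = handle_claim_inquiry("C-9")
print(reply)
print(ctx.log)
```

The point of the sketch is the shape, not the logic: each step reads from or writes to a connected system, and the `Conversation` object carries the results forward so that later steps and any human handoff can see what already happened.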

Key Differences Between Chatbots and AI Customer Service Agents

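No single distinction separates chatbots from AI agents. The difference is architectural, and it shows up at every layer that makes each technology work: logic, task execution, context, and adaptability. A chatbot follows a decision tree, returns information, holds little context, and needs manual reconfiguration when something changes. An AI agent interprets intent, takes action in connected systems, retains context across the conversation, and adapts when a request doesn't fit a script.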

The task execution dimension is what matters most for operations leaders. A chatbot that correctly identifies a customer's intent but doesn’t act on it has not resolved anything. That is the gap separating support metrics that look good in a dashboard from support operations that actually work. Resolution requires action in a connected system. Answering a question about a billing dispute is not the same as resolving one.

Use Cases: When to Use Each

The choice between implementing an AI chatbot or an AI customer support agent depends on the complexity of the problems the operation has to resolve. Chatbots have legitimate use cases, and pretending otherwise produces an unconvincing argument.

Chatbot Use Cases

Chatbots are a reliable solution for high-volume, low-complexity queries where the answer is always the same. FAQs, store hours, basic policy information, password resets, and initial contact triage all fit this profile. Chatbots also work well as the first filter in a support flow, collecting information, confirming identity, and routing to the appropriate team, as long as they are not expected to go further than that. The failure point arrives when organisations deploy chatbots against complex workflows and measure the resulting deflection numbers as though deflection and resolution are equivalent.

AI Agent Use Cases

AI agents thrive in complex, multi-step workflows where context is everything. Whether it’s verifying coverage for a new claim, pulling policy data for an endorsement, or resolving a billing dispute, the agent reasons through the history and data to find the right outcome without human intervention. In insurance, Notch's agents handle policy servicing requests, COI issuance, claim status inquiries, and time-demand letter prioritisation, with system-level execution behind each interaction. AI agents are designed for scenarios where customers need tasks completed rather than questions answered.

How to Choose Between a Chatbot and an AI Agent

Before deciding whether a chatbot or an AI agent works better, assess the workflows. Leaders who choose their AI before mapping their support flows usually end up with a tool they can't use. They buy a chatbot when they need an agent, or they deploy an agent that has no way to access the data it needs to actually solve problems.

The diagnostic questions that cut through the noise are straightforward, but the answers often reveal deep operational gaps. What percentage of inbound contacts require action in a connected system to resolve? How often do customer issues span more than two steps to close? What does the current escalation rate reveal about where scripted flows break down? Is the operation measuring containment or resolution, because those are not the same number? In regulated environments such as insurance, finance, and healthcare, does the current tool maintain a full audit trail of every action taken on behalf of a customer?

If the answers point toward complexity, context, and action, the requirement is an agent. A chatbot will reduce handle volume on straightforward queries. It will not move a resolution rate.
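The first three diagnostic questions reduce to simple shares over a sample of historical contacts. The sketch below assumes a hypothetical `Contact` record with three hand-labelled fields; the field names and the four sample rows are invented for illustration, and no threshold is implied for what counts as "enough" complexity.

```python
from dataclasses import dataclass


@dataclass
class Contact:
    """One inbound contact, labelled by hand or from ticket data (assumed fields)."""
    needed_system_action: bool  # did closing it require action in a connected system?
    steps_to_close: int         # how many distinct steps it took to close
    escalated: bool             # did the scripted flow hand it to a human?


def workflow_profile(contacts: list) -> dict:
    """Return the share of contacts matching each diagnostic question."""
    if not contacts:
        raise ValueError("need at least one contact")
    n = len(contacts)
    return {
        "action_share": sum(c.needed_system_action for c in contacts) / n,
        "multi_step_share": sum(c.steps_to_close > 2 for c in contacts) / n,
        "escalation_rate": sum(c.escalated for c in contacts) / n,
    }


# Illustrative sample only; real input would come from the ticketing system.
sample = [
    Contact(needed_system_action=False, steps_to_close=1, escalated=False),
    Contact(needed_system_action=True, steps_to_close=3, escalated=True),
    Contact(needed_system_action=True, steps_to_close=4, escalated=True),
    Contact(needed_system_action=True, steps_to_close=2, escalated=False),
]
profile = workflow_profile(sample)
print(profile)
```

For this sample, three of four contacts needed system action and half spanned more than two steps. Running the same calculation on a few weeks of real tickets is one way to ground the chatbot-or-agent decision in the workflow rather than in a vendor demo.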

How AI Agents Improve Customer Experience

The CX impact of moving from reactive, scripted support to autonomous resolution goes beyond speed. Vendor presentations focus on first response time. Resolution quality is what determines whether customers return.

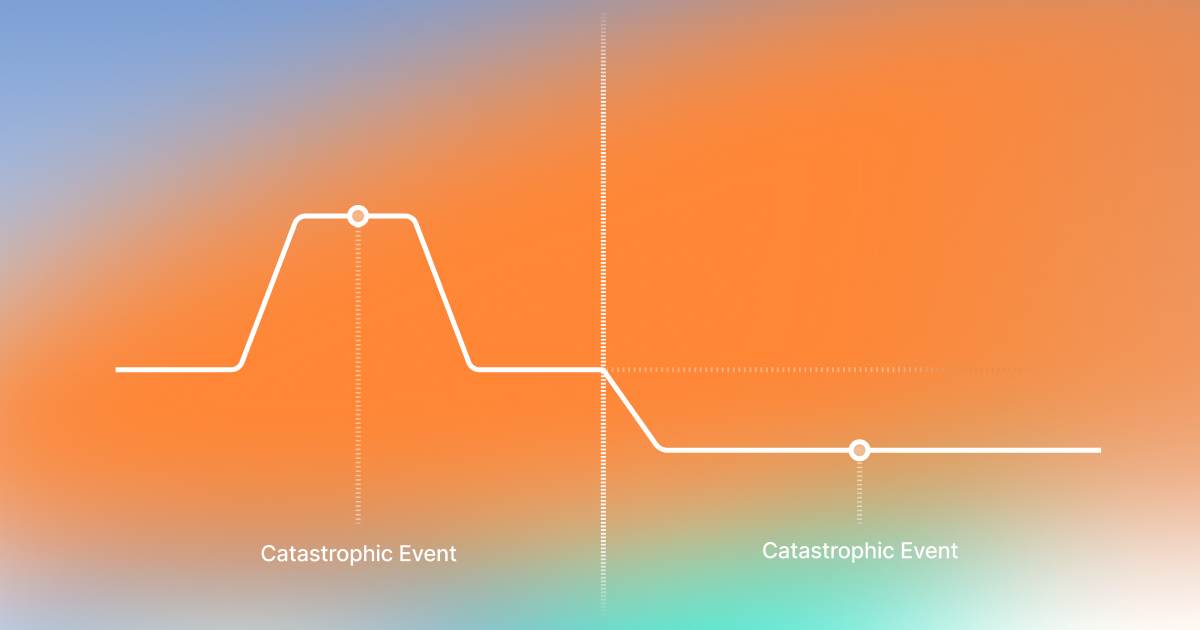

Context retention across interactions enables personalisation that scripted flows cannot approximate. Consistency across every channel eliminates the service gaps that occur when a customer’s experience depends on which agent or system handles their request. Proactive support goes further by preventing the contact altogether. In eCommerce customer support, an automated delivery update that prevents a ticket is worth far more than a chatbot answering 'Where is my order' in under ten seconds. In insurance, proactive claim updates during a catastrophe cut inbound volume and protect policyholder trust precisely when it is most at risk.

How AI Agents and Human Agents Work Together

AI and human agents should work as a coordinated unit, contrary to the belief that one will replace the other. AI agents resolve routine tasks and gather data, so when a complex case escalates, the human agent receives full context. In insurance, this collaboration requires redefining team roles and responsibilities and staying aware of the limitations of both chatbots and AI agents.

Redefining Team Roles and Responsibilities

Human agents in an AI-led operation shift from frontline ticket handling toward coaching, oversight, exception handling, and knowledge management. In practice, this means the work becomes harder and more valuable. Escalated cases that reach a human are genuinely complex rather than misrouted. The human role is to own the edge cases, approve the actions that require judgment, and continuously improve the system's knowledge base. AI agents do not make adjudication or legal decisions. They execute structured workflows with deterministic validation, and human agents remain in the loop for the decisions that require them, while AI absorbs everything that doesn't.
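One way to picture that division of labour is an allowlist check in front of every action, with an audit entry written either way. The sketch below is an assumption-heavy illustration: the `AUTONOMOUS_ACTIONS` set, the action names, and the `AuditTrail` class are hypothetical, and a production guardrail layer would validate far more than the action type.

```python
from dataclasses import dataclass, field
from datetime import datetime, timezone

# Hypothetical policy: action types the agent may execute without approval.
AUTONOMOUS_ACTIONS = {"send_coi", "update_address", "report_claim_status"}


@dataclass
class AuditTrail:
    """Append-only record of every action taken or escalated."""
    entries: list = field(default_factory=list)

    def record(self, action: str, outcome: str, context: dict) -> None:
        self.entries.append({
            "at": datetime.now(timezone.utc).isoformat(),
            "action": action,
            "outcome": outcome,
            "context": context,
        })


def route_action(action: str, context: dict, audit: AuditTrail) -> str:
    """Execute allowlisted actions; send everything else to a human with context."""
    outcome = "executed" if action in AUTONOMOUS_ACTIONS else "escalated_to_human"
    audit.record(action, outcome, context)
    return outcome


audit = AuditTrail()
print(route_action("send_coi", {"policy": "P-100"}, audit))
print(route_action("deny_claim", {"claim": "C-9", "notes": "coverage unclear"}, audit))
```

The check is deterministic: the same action type always takes the same path, regardless of how the request was phrased. A decision such as denying a claim never executes automatically here, and the human who picks it up receives the context the agent already gathered.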

Challenges and Limitations of Each Approach

Leaders who were burned by basic chatbots need to know that today’s AI agents are built to recognise their own limits rather than simply promise the world.

Chatbot Limitations

Brittle decision trees break at edge cases and require manual reconfiguration every time a product, policy, or workflow changes. Ambiguous or multi-intent queries produce loops or failures. The deeper problem is structural: chatbots optimised for containment metrics generate deflection numbers that look acceptable in dashboards while customer frustration accumulates underneath. The metric and the outcome point in opposite directions.
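The gap between the metric and the outcome is easy to reproduce with a few rows of data. The sketch below assumes a hypothetical `Ticket` record and uses simplified definitions: containment is the share of tickets never handed to a human, and resolution is the share where the customer's problem was actually solved. Real definitions vary by organisation.

```python
from dataclasses import dataclass


@dataclass
class Ticket:
    handed_to_human: bool  # did the conversation leave the bot?
    problem_solved: bool   # was the customer's issue actually closed?


def containment_rate(tickets: list) -> float:
    """Share of tickets the bot kept away from human agents."""
    return sum(not t.handed_to_human for t in tickets) / len(tickets)


def resolution_rate(tickets: list) -> float:
    """Share of tickets where the underlying problem was solved."""
    return sum(t.problem_solved for t in tickets) / len(tickets)


# Illustrative sample only.
tickets = [
    Ticket(handed_to_human=False, problem_solved=True),   # bot closed the issue
    Ticket(handed_to_human=False, problem_solved=False),  # bot sent a link, customer gave up
    Ticket(handed_to_human=False, problem_solved=False),  # bot looped, customer left
    Ticket(handed_to_human=True, problem_solved=True),    # escalated and solved by a human
]
print(f"containment: {containment_rate(tickets):.0%}")
print(f"resolution:  {resolution_rate(tickets):.0%}")
```

In this sample the dashboard would report 75% containment while only 50% of customers had their problem solved, and two of the three "contained" tickets are the frustrated ones.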

AI Agent Limitations

Knowledge base readiness is the most common failure point in production. An agent is only as good as the information it can access. Organisations that deploy agents on poorly structured or incomplete knowledge bases produce confident-sounding, wrong answers. Implementation complexity is real: backend integrations, guardrail configuration, and QA take time and expertise that generic deployments underestimate. True ROI means moving past speed and containment toward resolution quality and accuracy. In regulated sectors, it also requires a level of transparency and auditability that most AI platforms are not designed to provide.

The Vending Machine vs. The Personal Chef

A chatbot is a vending machine: fast, reliable within its range, and completely inflexible outside it. It delivers what's in the machine. If what the customer needs isn't stocked, the transaction fails. An AI agent is a personal chef: it reads the situation, works with what's available, adapts when the brief changes, and delivers something that actually fits the need. Both have genuine value. The operational mistake is using a vending machine when the customer sat down expecting a chef.

How Notch Approaches AI Customer Service

Notch is built on the foundational premise that runs through this entire article: deflection is not resolution. The platform combines AI-agentic architecture with rule-based systems and configurable guardrails to resolve complex support interactions across any channel, including email, chat, social, SMS, and voice, in 75+ languages.

On the conversational side, Notch agents handle policy servicing, billing disputes, FNOL intake, COI issuance, endorsement changes, and broker inquiries with system-level execution. On the back-office side, intelligent document ingestion, time-demand letter prioritisation, and coverage signal extraction run automatically with a full audit trail. For regulated industries, the guardrail and traceability layer matter as much as the resolution capability, because automation that introduces compliance risk is not a net improvement. Notch agents execute structured workflows with deterministic validation, and humans remain in the loop for the decisions that genuinely require judgment.

What the Decision Actually Comes Down To

The chatbot versus AI agent comparison is a question about what a support operation is expected to accomplish. If the goal is to answer common questions faster and filter inbound volume, a chatbot is a reasonable tool with a clearly bounded application. If the goal is to resolve customer problems end-to-end, at scale, with consistency, and in environments where compliance and audit trails are non-negotiable, the architecture required is different.

Most chatbot deployments over the last five years didn’t fail because of the technology. They failed because they asked a matching system to reason, then measured success by deflection rather than resolution. Getting that distinction right before deployment is the decision that determines whether an AI investment improves the operation or just gives the same problems a new interface.

Key Takeaways

Chatbots and AI customer service agents are not variations of the same tool. They are built on different architectures, designed for different problems, and measured by different outcomes.

A chatbot that correctly identifies what a customer needs but cannot act on it has resolved nothing.

A customer who was sent a link and a customer whose problem was closed are counted identically in containment metrics. Only one of them is satisfied.

AI agents do not eliminate the need for human judgment. They concentrate human attention on the interactions where judgment genuinely changes the outcome.

An agent is only as capable as the information it can access. Deploying one on poorly structured or incomplete data produces confident-sounding, wrong answers, which is worse than no answer at all.

Got Questions? We’ve Got Answers

What is the difference between a chatbot and an AI customer service agent?

A chatbot is a rule-based tool that matches user inputs to predefined outputs. It follows a decision tree, handles a fixed set of queries, and fails or loops when something falls outside those parameters.

An AI customer service agent operates differently at an architectural level. It reasons through what a customer needs, builds a path to resolution, integrates with backend systems to take action, and retains context across a conversation.

The chatbot answers. The agent resolves. That distinction sounds simple, but it is the entire difference between a support operation that closes cases and one that generates callbacks.

When is a chatbot the right tool?

Chatbots are a legitimate tool for high-volume, low-complexity queries where the answer is always the same regardless of the customer's history, account status, or plan details. FAQs, store hours, basic policy information, password resets, and initial routing all fit that profile.

The failure point arrives when an organisation deploys a chatbot against complex workflows and then measures deflection as though deflection and resolution were equivalent. A chatbot placed correctly in a support flow reduces agent load on the simple end. It will not move a resolution rate.

How do AI agents differ from older automation?

Older automation, whether rule-based bots, IVR systems, or RPA, operated in rigid silos. Each tool handled a specific task in a specific system and produced a human handoff the moment anything fell outside its defined scope. AI agents operate across the full workflow.

They interpret intent from natural language, access policy data, billing records, and claims history simultaneously, and execute transactions end-to-end without requiring a human to complete the action. The architectural shift is the point: not a faster version of the old tool, but a fundamentally different approach to what resolution means.

How should you choose between a chatbot and an AI agent?

Map the workflows before selecting the tool. Leaders who choose their AI system first and map their support flows second tend to end up with either a chatbot deployed against problems too complex for it to handle, or an agent that cannot access the data it needs to actually solve anything.

The diagnostic questions that matter are: what share of inbound contacts require action in a connected system to close, how often do issues span more than two steps, and is the operation measuring containment or resolution? If the answers point toward complexity and action, the requirement is an agent.

How do AI agents and human agents work together?

The productive model is not AI replacing humans. It is AI absorbing the structured, repeatable layer of support so that human judgment is concentrated on interactions where it actually changes the outcome. In practice, that means escalated cases arriving at a human agent with full context already assembled, rather than starting from scratch. Human roles shift toward coaching, exception handling, oversight, and knowledge management.

The work becomes harder and more valuable. AI agents execute structured workflows with deterministic validation and do not make adjudication or legal decisions. Humans remain responsible for the cases that genuinely require them.
