How to Write RFI Questions That Reveal AI Support Vendor Capability

You send out an RFI to six vendors and get back six responses that read almost identically. "Yes, we support omnichannel." "Yes, we offer GenAI capabilities." "Yes, we integrate with your systems." The decks blur together, and finding meaningful differences becomes an exercise in reading between the lines rather than comparing clear capabilities.

The challenge isn't your procurement process. AI customer support works differently from traditional software categories, and RFI templates designed for helpdesk platforms often screen out the vendors most likely to deliver real operational impact. Most templates were built for platforms like Zendesk, Salesforce Service Cloud, and Freshdesk, and they assume you're buying a platform to host your support operations. If you're evaluating an AI agent layer that automates workflows on top of existing systems, those templates will reward vendors selling yesterday's architecture with a fresh coat of GenAI paint.

What follows is a framework built from real procurement cycles, refined based on which answers revealed genuine capability versus rehearsed marketing.

Channels and Case Handling

Customers reach out through whatever channel they prefer and expect continuity regardless of how they contact you. The question isn't whether the platform unifies these touchpoints; it's whether the AI can maintain context and resolve issues across them without human intervention.

The standard question asks whether agents see a unified conversation history. That's a helpdesk question: it asks where humans work. For AI agent evaluation, ask whether the AI itself tracks context across touchpoints and closes the issue on its own.

"Do you provide native omnichannel support? List all channels supported natively including email, web chat, in-app chat, social, SMS, and voice."

Native support differs from integration-dependent support in reliability and feature consistency. A channel bolted on through a third party often lags behind or breaks when updates roll out. Getting the full list tells you whether you're looking at a unified platform or a patchwork of integrations marketed as omnichannel.

"When a customer's issue spans multiple channels, how does the AI preserve context and intent across touchpoints without human intervention?"

Someone emails Monday about a billing issue, calls Wednesday for a status update, opens chat Friday because they're frustrated. Can the AI recognize this as one continuous problem? Vendors who've built for autonomous resolution answer with architectural specifics. Those who've bolted AI onto agent-assist tools pivot toward talking about their unified agent workspace instead.
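To make the Monday/Wednesday/Friday example concrete, here is a minimal sketch of one way a system might stitch contacts into a single thread. It assumes a resolved customer identity (`customer_id`) and an intent label (`topic`) already produced upstream; both are hypothetical fields, and the seven-day window is an arbitrary choice. A vendor's real answer should explain how they handle the hard parts this skips, such as matching an email address to a phone number.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta


@dataclass
class Contact:
    customer_id: str   # hypothetical: identity already resolved across channels
    channel: str
    topic: str         # hypothetical: intent label from an upstream classifier
    timestamp: datetime


def link_contacts(contacts, window=timedelta(days=7)):
    """Group contacts into threads: same customer, same topic, and within
    `window` of the previous contact in that thread."""
    threads = []
    open_threads = {}  # (customer_id, topic) -> index into threads
    for c in sorted(contacts, key=lambda c: c.timestamp):
        key = (c.customer_id, c.topic)
        idx = open_threads.get(key)
        if idx is not None and c.timestamp - threads[idx][-1].timestamp <= window:
            threads[idx].append(c)          # continue the existing issue
        else:
            threads.append([c])             # start a new issue
            open_threads[key] = len(threads) - 1
    return threads


contacts = [
    Contact("cust-42", "email", "billing", datetime(2024, 3, 4, 9)),   # Monday
    Contact("cust-42", "voice", "billing", datetime(2024, 3, 6, 14)),  # Wednesday
    Contact("cust-42", "chat", "billing", datetime(2024, 3, 8, 16)),   # Friday
]
threads = link_contacts(contacts)
print(len(threads), [c.channel for c in threads[0]])
# -> 1 ['email', 'voice', 'chat']
```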

"Does the AI resolve the issue or hand it off? Describe what complete autonomous resolution looks like end-to-end."

Force vendors to show their work. Not deflected to self-service. Not escalated to a human. Not closed because the customer stopped responding. Resolved. The description tells you whether they've built for resolution or containment metrics.

AI Capabilities

Here's where you separate real platforms from chatbots wearing enterprise packaging. These questions demand numbers from production, not theoretical capability from slide decks.

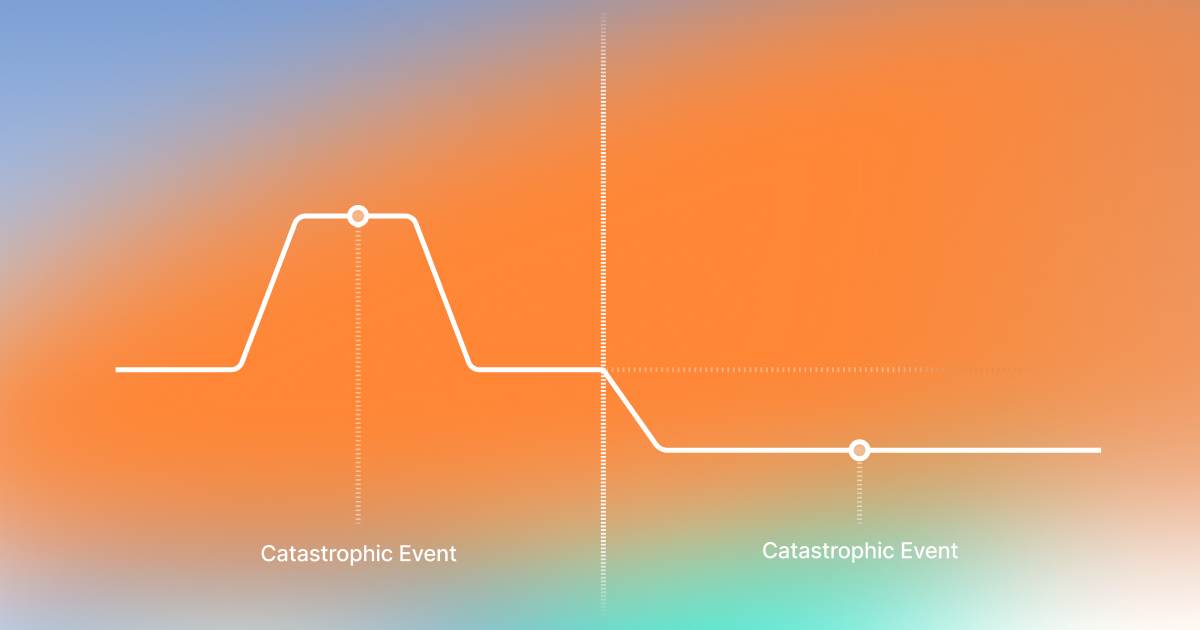

"What is the highest autonomous resolution rate achieved by a single customer in production? Define how you measure resolution vs. deflection."

The definition matters more than the number. A deflected ticket is one where the customer gave up or got pushed toward documentation they'd already tried. A resolved ticket means their problem got solved. Vendors conflate these constantly. This question forces them to show how they count.

"What was the largest percentage reduction in agent-handled volume achieved by a customer after deploying your AI? Provide timeframe and context."

A vendor claiming 70% automation that delivers a 15% reduction in agent workload has built something impressive for demos and useless for operations. Ask about the timeframe, too: did that reduction happen over 90 days in a managed pilot, or does it reflect steady-state performance across a full year?

"Do you provide GenAI agent-assist capabilities such as drafting, summarization, suggested replies, and intent detection? List features."

Capability lists reveal less than adoption metrics. How often do agents accept those suggestions? What's the accuracy rate on intent detection? A feature nobody uses isn't really a feature.

"Can GenAI be configured as first-line support for email cases? Describe supported workflows and controls."

Email carries high volume and high complexity simultaneously, so the controls matter as much as the capability. Can you tune how aggressively the AI acts? You need to configure this system to your risk tolerance, not accept whatever defaults shipped.

"Do you offer Quality Assurance capabilities for AI interactions natively, including review, sampling, scoring, and coaching? If not native, list preferred partner integrations."

QA gets overlooked until something goes wrong publicly. Someone needs to watch what the AI does when edge cases emerge. This question establishes who that someone is and what tools they'll have.

AI Architecture and Governance

Architecture questions sound technical, but they expose whether AI capabilities have substance or exist only at the surface level.

"Is your AI a single large language model or a multi-agent system that decomposes complex tasks into specialized sub-tasks? How does this affect accuracy on edge cases?"

Single-model architectures handle simple queries well but degrade on complex workflows. A customer asking something requiring information from three systems, ambiguous intent interpretation, and multi-step resolution will expose the difference. Not every vendor has built multi-agent systems, and those who haven't will tell you their single model handles everything fine. Push back.

"How does accuracy change as query complexity or document length increases?"

"What's my order status" gets answered correctly by nearly everyone. That's not where differentiation happens. Ask about queries requiring synthesis across long documents or navigating ambiguous intent. Vendors who've measured this share the data. Those who haven't probably won't like what they'd find.

"Is GenAI functionality like summaries, tone checks, and drafting native to your platform or delivered via third-party bolt-on APIs?"

Native versus bolt-on affects more than performance. Compliance controls extending through the entire stack behave differently than controls stopping at integration boundaries. When something goes wrong, native architecture gives you one vendor to hold accountable.

"Do you use foundation models from third-party AI labs such as OpenAI, Anthropic, Google, or open source? List providers and explain how easy it is to switch between them."

Multiple providers might indicate flexibility or architectural fragmentation. The list opens a conversation about why they chose those providers, how they manage multi-model complexity, and what switching would actually involve.

"Do you have controls to monitor and mitigate GenAI-specific risks like prompt injection, data leakage, and unsafe outputs?"

There's a critical distinction between controls at the architecture level and controls implemented through prompting. Prompt-level guardrails can be worked around by anyone who knows what they're doing, because they are only instructions the model may or may not follow. Architecture-level controls are enforced by the system itself: certain actions cannot execute without human approval, whatever the model outputs. One protects you. The other mostly protects vendor marketing claims.
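The difference is easiest to see in code. Below is a hypothetical sketch, with invented action names, of an architecture-level control: the model can only propose an action, and ordinary deterministic code decides whether it runs. Nothing in a prompt, injected or otherwise, can change that branch.

```python
from dataclasses import dataclass, field
from typing import Optional

# Hypothetical action policy; these names are illustrative, not any vendor's API.
AUTONOMOUS_ACTIONS = {"lookup_order", "resend_invoice"}
HUMAN_APPROVAL_ACTIONS = {"issue_refund", "change_payout_account"}


class ApprovalRequired(Exception):
    """Raised when an action needs a named human approver before it can run."""


@dataclass
class ProposedAction:
    name: str                                   # action the model wants to take
    params: dict = field(default_factory=dict)


def execute(action: ProposedAction, approved_by: Optional[str] = None) -> str:
    # This check runs in plain code after the model responds, so no prompt
    # (including an injected one) can rewrite it. The returned strings stand
    # in for real backend calls.
    if action.name in AUTONOMOUS_ACTIONS:
        return f"executed {action.name}"
    if action.name in HUMAN_APPROVAL_ACTIONS:
        if approved_by is None:
            raise ApprovalRequired(f"{action.name} needs human sign-off")
        return f"executed {action.name} (approved by {approved_by})"
    # Default-deny: anything not explicitly listed is refused.
    raise PermissionError(f"{action.name} is not an allowed action")


# Even if an injected instruction convinces the model to propose a refund,
# the gate stops it until a person approves.
try:
    execute(ProposedAction("issue_refund", {"amount": 500}))
except ApprovalRequired as err:
    print(err)  # issue_refund needs human sign-off
```

Default-deny matters here: an action the model invents on its own is refused rather than attempted.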

Workflow, Routing, and Orchestration

Traditional helpdesks ask about visual workflow builders. You drag boxes, draw arrows, manually encode every decision path. That's the old model.

AI systems that reduce operational burden learn from existing documentation. Your SOPs, macros, historical tickets. They capture implicit knowledge without requiring someone to flowchart every scenario.

"How does the AI learn new workflows? Does it require manual configuration or can it ingest existing documentation like SOPs, macros, and historical tickets?"

The training process description reveals what you're buying. Software you'll spend months configuring? Or a system that adapts to how you already work?

"How long does it take from signing a contract to first autonomous resolution in production?"

Some vendors need months of configuration. Others observe your operations and start resolving tickets within weeks. Vague answers suggest the vendor hasn't done this enough times to know their own deployment timeline.

"Can the platform trigger actions in backend systems through APIs and webhooks without agents leaving the workspace? Provide examples."

If a vendor struggles to provide examples, their integration depth may be shallower than marketing suggests.

Performance Measurement

Standard analytics show resolution rates and handle times. Those aggregate numbers hide everything you need to know about where the system struggles.

"How do you define and measure genuine resolution? How is that different from deflection, containment, or closure?"

If the vendor counts a ticket as resolved when the customer stops responding, their resolution rate looks better than reality. The definition reveals what they're optimizing for.
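A minimal sketch of why the definition matters, using a hypothetical set of ticket flags. The `confirmed_fixed` signal and the seven-day reopen window are assumptions for illustration; the point is that the same tickets produce different headline numbers depending on which outcomes get counted.

```python
from collections import Counter
from dataclasses import dataclass


@dataclass
class Ticket:
    human_involved: bool        # any agent touch, including a transfer
    confirmed_fixed: bool       # hypothetical: explicit confirmation or a verified backend change
    customer_went_silent: bool  # conversation ended with no reply
    reopened_within_7d: bool    # same issue came back


def classify(t: Ticket) -> str:
    if t.human_involved:
        return "escalated"
    if t.confirmed_fixed and not t.reopened_within_7d:
        return "resolved"
    if t.customer_went_silent:
        return "abandoned"      # many dashboards quietly count this as resolved
    return "contained"          # AI kept the conversation, but nothing shows the problem was solved


def outcome_rates(tickets):
    counts = Counter(classify(t) for t in tickets)
    return {k: counts[k] / len(tickets) for k in ("resolved", "abandoned", "contained", "escalated")}


tickets = (
    [Ticket(False, True, False, False)] * 6     # genuinely resolved
    + [Ticket(False, False, True, False)] * 2   # customer stopped responding
    + [Ticket(False, False, False, False)]      # contained, outcome unknown
    + [Ticket(True, False, False, False)]       # handed to a human
)
rates = outcome_rates(tickets)
print(rates)
# -> {'resolved': 0.6, 'abandoned': 0.2, 'contained': 0.1, 'escalated': 0.1}
```

Counting everything the AI kept away from a human, this sample shows 90% containment. Counting silent customers as resolved gives 80%. The stricter definition gives 60%. Same tickets, three headline numbers.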

"Do you provide analytics showing where the AI is failing? Not just overall resolution rate, but which workflow branches or case types it can't handle?"

A 75% resolution rate could mean uniform performance across case types, or 95% on simple cases and 20% on complex ones. Vendors who surface failure patterns build systems that improve. Those showing only aggregate metrics build systems that plateau.
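The arithmetic is simple, which is why aggregates hide so much. This sketch uses invented volumes that reproduce the 95%/20% split; note that the aggregate also moves when the case mix changes, even if the AI's performance on each case type does not.

```python
def aggregate_rate(segments: dict) -> float:
    """segments maps case type -> (tickets, resolved)."""
    total = sum(tickets for tickets, _ in segments.values())
    resolved = sum(res for _, res in segments.values())
    return resolved / total


# Hypothetical volumes chosen to reproduce the 95% / 20% example.
current = {"simple": (1100, 1045), "complex": (400, 80)}
for name, (tickets, res) in current.items():
    print(f"{name}: {res / tickets:.0%} of {tickets} tickets")
print(f"aggregate: {aggregate_rate(current):.0%}")   # aggregate: 75%

# Same per-segment performance, but a month where complex cases are half the volume.
shifted = {"simple": (1000, 950), "complex": (1000, 200)}
print(f"aggregate: {aggregate_rate(shifted):.1%}")   # aggregate: 57.5%
```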

Knowledge Management and Continuous Learning

Forget questions about knowledge authoring tools and approval workflows. Those are for full-suite CX platforms. For AI evaluation: does the system stay current, and does it learn from mistakes?

"How does the AI stay current when your knowledge base or policies change? Automatic or manual retraining?"

If every change requires manual retraining, you'll perpetually run on outdated information. Automatic incorporation keeps you current without maintenance cycles that never quite happen on schedule.

"If an agent corrects an AI response, how does that feedback improve future behavior? How quickly does the model adapt?"

An agent fixing an AI response has identified exactly where the system failed. Vendors who capture corrections build systems that improve. Those who don't build systems that repeat the same mistakes.

Migration and Onboarding

For AI vendors, migration isn't primarily about data portability. It's how quickly the AI gets smart enough to handle real traffic.

"What inputs does the AI require to reach production-level performance? List required assets and minimum viable dataset sizes."

Understanding what's needed upfront prevents discovering three months into implementation that you need documentation that doesn't exist yet.

"Describe your shadow mode or dry-run capability. Can the AI observe live operations and suggest responses before going fully autonomous?"

Shadow mode lets you evaluate performance on real traffic without customer exposure. Vendors offering this have thought about deployment risk. Those expecting you to trust their system sight unseen on day one have thought primarily about closing the deal.
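One way to score shadow mode, sketched under simplifying assumptions: each record pairs what the AI would have done with what the agent actually did, and agreement is measured per case type. The action labels, exact matching, and the 0.9 threshold are all hypothetical; real evaluations of free-text replies usually need graded comparison rather than exact equality.

```python
from dataclasses import dataclass


@dataclass
class ShadowRecord:
    case_type: str
    ai_action: str      # what the AI would have done (never shown to the customer)
    agent_action: str   # what the human agent actually did


def agreement_by_case_type(records):
    totals, matches = {}, {}
    for r in records:
        totals[r.case_type] = totals.get(r.case_type, 0) + 1
        matches[r.case_type] = matches.get(r.case_type, 0) + int(r.ai_action == r.agent_action)
    return {ct: matches[ct] / totals[ct] for ct in totals}


def ready_for_autonomy(rates, threshold=0.9):
    """Case types whose shadow agreement meets a pre-agreed bar (threshold is an assumption)."""
    return sorted(ct for ct, rate in rates.items() if rate >= threshold)


records = (
    [ShadowRecord("order_status", "send_tracking", "send_tracking")] * 19
    + [ShadowRecord("order_status", "send_tracking", "escalate")]
    + [ShadowRecord("billing_dispute", "issue_credit", "escalate")] * 3
    + [ShadowRecord("billing_dispute", "escalate", "escalate")] * 2
)
rates = agreement_by_case_type(records)
print(rates, ready_for_autonomy(rates))
# -> {'order_status': 0.95, 'billing_dispute': 0.4} ['order_status']
```

Breaking agreement down by case type lets you turn on autonomy for the categories that have earned it while the rest stay in shadow.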

"Can you ingest legacy case data via JSON or CSV, including attachments and custom fields?"

Format constraints determine how much transformation work falls on your team.
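If a vendor accepts only a fixed JSON shape, mapping your helpdesk export onto it becomes your job. A rough sketch of that work, assuming a hypothetical CSV export where custom fields carry a `cf_` prefix and attachments are listed as semicolon-separated file paths. Only the references are carried over here; the files themselves would still need to be transferred.

```python
import csv
import io
import json

# Hypothetical export layout: these column names and the "cf_" prefix are assumptions.
CORE_COLUMNS = ("ticket_id", "created_at", "subject", "body")


def csv_to_json_records(csv_text: str) -> list:
    records = []
    for row in csv.DictReader(io.StringIO(csv_text)):
        record = {col: row.get(col, "") for col in CORE_COLUMNS}
        # Assumes attachments were exported as a semicolon-separated list of file paths.
        record["attachments"] = [p for p in (row.get("attachments") or "").split(";") if p]
        # Gather vendor-specific custom fields under one key, dropping the prefix.
        record["custom_fields"] = {
            k[len("cf_"):]: v for k, v in row.items() if k and k.startswith("cf_")
        }
        records.append(record)
    return records


sample = (
    "ticket_id,created_at,subject,body,attachments,cf_policy_number,cf_region\n"
    'T-1,2024-01-05,Claim status,"Where is my claim, please?",scan1.pdf;scan2.pdf,PN-889,EU\n'
)
print(json.dumps(csv_to_json_records(sample), indent=2))
```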

Commercial Model and Accountability

Pricing structure reveals incentive alignment more clearly than any capability discussion.

"Do you offer performance-based pricing tied to resolution outcomes? Describe your POC structure, success criteria, and how cost scales with demonstrated value."

Vendors pricing on resolution outcomes succeed only when you succeed. Vendors pricing on seats succeed regardless of whether problems get solved. Confident vendors absorb more risk during POC.

"What is your team's ongoing involvement after go-live? How many people on your side support a typical enterprise deployment?"

Software vendors hand you credentials and documentation. Managed service providers maintain involvement through optimization. The staffing model tells you whether you're buying a tool or a partnership.

"Is pricing linear or usage-based with variables like token consumption and automation volume?"

Variable fees surprise you when volume spikes. Understanding all components lets you model costs across scenarios.
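A minimal cost model makes the spike risk visible. Every price below is a placeholder, not a real quote, and the 40% resolution rate is assumed; plug in each vendor's actual terms and your own volume history.

```python
# Hypothetical pricing terms for three models; replace with each vendor's actual quote.
PRICING = {
    "seat_based": {"per_seat": 150.0, "seats": 60},
    "per_resolution": {"per_resolution": 1.50},
    "usage_based": {"platform_fee": 5000.0, "tokens_per_conv": 4000, "per_1k_tokens": 0.02},
}


def monthly_cost(model: str, conversations: int, resolution_rate: float) -> float:
    p = PRICING[model]
    if model == "seat_based":
        return p["per_seat"] * p["seats"]              # flat, regardless of volume or outcomes
    if model == "per_resolution":
        return p["per_resolution"] * conversations * resolution_rate
    if model == "usage_based":
        tokens = conversations * p["tokens_per_conv"]
        return p["platform_fee"] + tokens / 1000 * p["per_1k_tokens"]
    raise ValueError(f"unknown pricing model: {model}")


scenarios = {"normal month": 50_000, "spike month": 150_000}
for label, volume in scenarios.items():
    costs = {m: round(monthly_cost(m, volume, resolution_rate=0.4)) for m in PRICING}
    print(label, costs)
# -> normal month {'seat_based': 9000, 'per_resolution': 30000, 'usage_based': 9000}
# -> spike month {'seat_based': 9000, 'per_resolution': 90000, 'usage_based': 17000}
```

The seat line stays flat while the other two move with volume, so the spike scenario is where quotes diverge most. The sketch ignores extra seats you might add to cover a spike, which a fuller model would include.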

Security and Compliance

"Which security certifications are valid and what's their scope? SOC 2 Type II, ISO 27001, HIPAA?"

SOC 2 covering the core platform differs from SOC 2 covering only certain modules. HIPAA compliance with a signed BAA differs from HIPAA "readiness" that means nothing contractually.

"Can data be stored in specific regions to meet GDPR requirements? List supported regions and limitations."

Some vendors store certain data types centrally regardless of what your contract says. Limitations matter as much as capabilities.

"Do you support PII masking in the UI and at the data layer? Explain role-based access and configurability."

Different team members need different visibility. Role-based controls at both layers provide defense in depth.
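As an illustration of role-based visibility, here is a sketch of read-time masking. The two regexes and three roles are hypothetical and far narrower than production PII detection; masking at the data layer (for example, redacting before storage) is a separate control that this does not cover.

```python
import re

# Hypothetical patterns; real PII detection covers far more than emails and phone numbers.
PATTERNS = {
    "email": re.compile(r"[\w.+-]+@[\w-]+\.[\w.-]+"),
    "phone": re.compile(r"\+?\d[\d\s-]{7,}\d"),
}

# Hypothetical roles mapped to the PII types each one may see unmasked.
ROLE_CAN_SEE = {
    "support_agent": set(),
    "billing_specialist": {"email"},
    "compliance_auditor": {"email", "phone"},
}


def mask(text: str, role: str) -> str:
    visible = ROLE_CAN_SEE.get(role, set())   # unknown roles see everything masked
    for kind, pattern in PATTERNS.items():
        if kind not in visible:
            text = pattern.sub(f"[{kind.upper()}]", text)
    return text


msg = "Reach me at jane.doe@example.com or +1 555-010-7788."
print(mask(msg, "support_agent"))       # Reach me at [EMAIL] or [PHONE].
print(mask(msg, "billing_specialist"))  # Reach me at jane.doe@example.com or [PHONE].
```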

Scalability

Scale questions should address your worst days, not average ones.

"Do you have proven experience handling large volume spikes, such as 150k or more cases within 24 hours? Provide examples."

Black Friday for ecommerce. Weather events for insurance. Product launches for SaaS. Examples from real deployments matter more than load test results under ideal conditions.
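It helps to translate a daily figure into the rate the system must sustain. A quick sketch, assuming an illustrative peak-to-average ratio of 3; your own traffic history should set that number.

```python
def required_throughput(cases_per_day: int, peak_to_average: float = 3.0) -> dict:
    """Convert a daily case volume into average and peak per-minute rates.
    The default peak_to_average of 3.0 is an assumption, not a benchmark."""
    per_hour = cases_per_day / 24
    return {
        "avg_per_hour": per_hour,
        "avg_per_minute": per_hour / 60,
        "peak_per_minute": per_hour / 60 * peak_to_average,
    }


rates = required_throughput(150_000)
print({k: round(v, 1) for k, v in rates.items()})
# -> {'avg_per_hour': 6250.0, 'avg_per_minute': 104.2, 'peak_per_minute': 312.5}
```

A vendor citing 150k cases in a day should be able to say what per-minute peak they actually sustained, and whether backend integrations kept up.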

"Do you provide a staging environment that mirrors production?"

Parity gaps between staging and production cause surprises at the worst times.

Customer Base and Industry Coverage

"Do you have customers in our industry? Provide the number and example use cases."

A vendor deployed in insurance twenty times has encountered edge cases and regulatory requirements that a first-timer hasn't.

Choosing a Partner Built for Outcomes

The questions throughout this guide expose one divide: platforms optimizing for activity metrics versus platforms accountable for outcomes.

Deflection rates, ticket closure counts, and conversation volumes look impressive on dashboards without proving customers got what they came for. The vendors worth serious evaluation tell you exactly how many issues were genuinely resolved and exactly where the AI fell short.

Notch is built around that distinction. The platform uses multi-agent architecture that decomposes complex workflows into specialized sub-tasks, maintaining accuracy where single-model systems struggle. It combines AI autonomy with deterministic validation and configurable guardrails, so automation depth doesn't require sacrificing compliance visibility. And it deploys with a dedicated managed service team, moving from signed contract to production-level resolution in weeks rather than quarters.

The questions that matter most force vendors to demonstrate rather than describe. Ask for production resolution rates, not feature lists. Ask how the AI handles what it doesn't know. Ask who's accountable after go-live.

The answers tell you which vendors have built something real.

Key Takeaways

RFI templates built for helpdesk platforms actively work against you when evaluating AI customer service software.

The definition of "resolved" matters more than any resolution rate figure.

Before comparing numbers across vendors, get each one to define exactly how they count, because the gap between a 75% "resolution rate" and 75% genuine resolution can represent an entirely different product.

Architecture questions sound technical but reveal whether AI capabilities are real or cosmetic.

Vendors pricing on resolved outcomes succeed only when you do. Vendors pricing on seats or conversation volume succeed regardless of whether customer problems actually get solved. Confident vendors absorb risk during proof of concept. Those who won't are telling you something.

Vendors who let you evaluate performance on real traffic before going fully autonomous have thought carefully about deployment risk. Those who expect you to go live on trust alone have thought primarily about closing the deal.

Got Questions? We’ve Got Answers

Traditional helpdesk evaluation focuses on where human agents work: ticket views, queue management, SLA dashboards, and workflow builders. AI customer service software evaluation requires a different frame entirely, because the system is not supporting agents; it is replacing a significant share of their work.

The questions that matter shift from "can agents see unified history" to "can the AI maintain context across touchpoints and resolve issues without human involvement." Vendors who have built genuine autonomous resolution answer those questions with production data and architectural specifics.

Those who have bolted AI onto agent-assist tools pivot toward talking about their unified agent workspace instead.

Get the definition before trusting the number. A vendor reporting 80% resolution may be counting tickets as resolved when the customer stops responding, when the session ends, or when the customer clicks a link regardless of whether it answered their question.

Genuine resolution means the customer's problem was closed because it was solved. Ask each vendor to walk through exactly what triggers a resolved status in their reporting.

Ask whether they can show you, separately, what percentage of contacts required no human involvement versus what percentage were deflected or transferred. Those are different numbers, and the gap between them is where the real performance picture lives.

Two questions cut through the most noise. First: is the system a single large language model or a multi-agent architecture that decomposes complex tasks into specialized sub-tasks?

Single-model systems handle straightforward queries reliably but degrade when a customer's issue requires pulling from multiple systems, interpreting ambiguous intent, and executing a multi-step resolution.

Second: how does accuracy change as query complexity or document length increases? Vendors who have measured this share the data. Those who haven't probably won't like what they'd find. Push for production numbers, not benchmark results from controlled conditions.

Standard templates were built around a procurement assumption that no longer holds for AI: that you are buying a platform to manage where humans work.

When that assumption breaks down, the template starts rewarding the wrong answers. A vendor with sophisticated deflection capabilities and a polished omnichannel interface scores well on a traditional template.

A vendor that autonomously resolves 70% of complex insurance claims with a full audit trail may score worse because its answers don't fit the template's categories. The fix is not a better template. It is a different set of questions, starting with what the system actually does when no human is involved.

Shadow mode before live deployment. A well-structured proof of concept lets the AI observe real traffic, suggest responses, and demonstrate performance before it handles anything autonomously.

That gives you production evidence without customer exposure and tells you far more than a controlled demo using pre-selected scenarios. Define success criteria before the POC starts: not "does it look good" but specific resolution rate thresholds, accuracy benchmarks on your case types, and compliance requirements for audit trails.
