How to Scale Good Judgment Without Turning It Into a Macro

Templates, Tickets, and the Agent Brain

The best human agents don't read from scripts. They internalize frameworks - mental shapes for the common case - and then adapt when the real situation doesn't match the shape. That gap between script and framework is the entire challenge of AI agent design.

Templates get a bad reputation because most of them are macros. “If issue = X, reply = Y.” Brittle by construction. Useless the moment a customer writes something the macro didn’t anticipate - which is constantly. But a template that is designed correctly is not a constraint on judgment. It is the architecture for it.

That’s the difference between scaling bureaucracy and scaling intelligence.

Templates Are How Humans Think (Quickly)

Templates exist because people working under pressure need cognitive shortcuts. When a claims adjuster opens a new file, they don’t reason from first principles. They run a familiar pattern: identify the claim type, pull relevant policy sections, check for red flags, gather supporting documents, apply the correct rules. That pattern is a template.

Good templates do several things simultaneously: they make the judgment of experienced people portable, they reduce decision fatigue during high-volume periods, and they give teams a shared artifact to review and improve. “How we handle time-demand letters” stops being institutional memory trapped in one person’s head.

They fail for a predictable reason: they get treated like macros. Nuance gets stripped out. What was a judgment framework becomes a rigid checklist. Then the first edge case arrives and the system fails at exactly the moment it’s needed most.

Why Templates Matter Inside a Company

In a fast-moving company, templates remove unnecessary friction. They let teams build faster (no reinventing structure every sprint), make knowledge shareable (best practices become portable instead of trapped in Slack threads), create a common language for reviewing quality, and enable benchmarking against a defined standard.

But templates only stay useful if they remain alive. The moment a template becomes rigid bureaucracy, people route around it. That is the tension: structure that accelerates, without structure that suffocates.

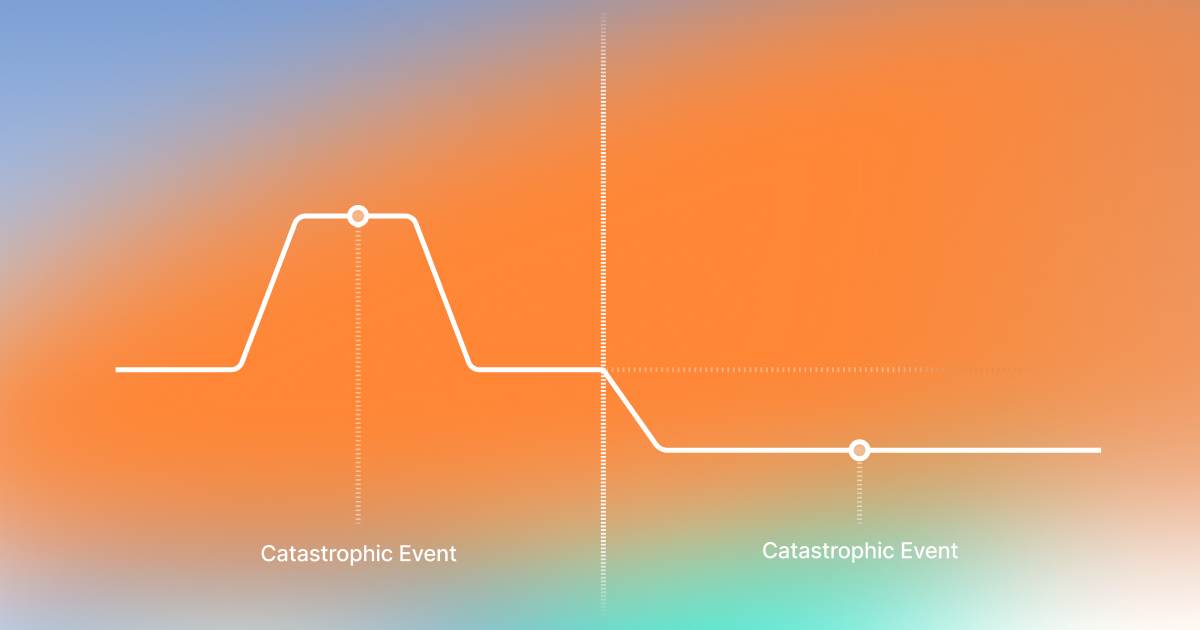

The Agent Resolution Loop

Before building templates, you need a stable framework for what good resolution looks like. Not per-vertical. Not per-workflow. The underlying loop that any trained agent - human or AI - runs through to solve a problem correctly.

We call this the Agent Resolution Loop. Five stages:

- Understand: what happened, what the customer needs, what context is missing

- Gather: pull account data, policy terms, prior interactions, supporting documents

- Choose: identify the smallest set of actions that resolves the issue within policy constraints

- Execute: take those actions, communicate clearly, within authorized boundaries

- Confirm: verify the resolution landed, set follow-up expectations, log what was learned

This loop doesn’t change by vertical. The same five stages apply to a retail return, an insurance FNOL, and a financial transaction dispute. What changes is what the agent does at each stage.
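As a rough illustration of "same loop, different stage behavior", the sketch below fixes the five stages in an enum and lets each vertical plug in its own handlers. The `Case` container, the `stub` handlers, and the vertical names are hypothetical placeholders, not a description of any real product's internals.

```python
from dataclasses import dataclass, field
from enum import Enum
from typing import Callable, Dict, List


class Stage(Enum):
    UNDERSTAND = "understand"
    GATHER = "gather"
    CHOOSE = "choose"
    EXECUTE = "execute"
    CONFIRM = "confirm"


@dataclass
class Case:
    """Hypothetical container for what the agent learns during the loop."""
    message: str
    notes: Dict[str, str] = field(default_factory=dict)
    trail: List[str] = field(default_factory=list)


Handler = Callable[[Case], None]


def run_loop(case: Case, handlers: Dict[Stage, Handler]) -> Case:
    # Iterating an Enum follows definition order, so the stages run in sequence.
    for stage in Stage:
        handlers[stage](case)
        case.trail.append(stage.value)
    return case


def stub(vertical: str, stage: Stage) -> Handler:
    # Placeholder handler; a real one would call a model or a tool.
    def handler(case: Case) -> None:
        case.notes[stage.value] = f"{vertical} logic for {stage.value}"
    return handler


# Same loop, different per-stage behavior for each vertical.
retail = {s: stub("retail return", s) for s in Stage}
insurance = {s: stub("insurance FNOL", s) for s in Stage}

retail_case = run_loop(Case("My order arrived damaged"), retail)
insurance_case = run_loop(Case("I need to report an accident"), insurance)
```

Both cases end with an identical `trail`; only the contents of `notes` differ by vertical.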

What a Modern AI Template Actually Is

A modern AI agent template is not a script. It’s a guiding schema: a structured set of instructions that communicates purpose, constraints, information requirements, tool usage, and desired output - in that order.

A macro tells the agent what to say. A schema tells the agent how to think. The former collapses when the real case deviates from the expected one. The latter gives the reasoning structure to handle variance without breaking.

The test: hand the template to a strong human agent. Does it make them faster and more consistent? Or does it micromanage them into frustration? If it would empower a human, it will work for the model.
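One way to picture a guiding schema is as a small typed record whose fields mirror the five elements named above, rendered in that same order. This is a minimal sketch; the class name, field names, and the damaged-shipment example values are assumptions made for illustration.

```python
from dataclasses import dataclass
from typing import List


@dataclass(frozen=True)
class TemplateSchema:
    purpose: str
    constraints: List[str]
    information_requirements: List[str]
    tool_usage: List[str]
    desired_output: List[str]

    def render(self) -> str:
        # Sections are emitted in the order the article lists them.
        sections = [
            ("Purpose", [self.purpose]),
            ("Constraints", self.constraints),
            ("Information requirements", self.information_requirements),
            ("Tool usage", self.tool_usage),
            ("Desired output", self.desired_output),
        ]
        lines: List[str] = []
        for title, items in sections:
            lines.append(f"{title}:")
            lines.extend(f"- {item}" for item in items)
        return "\n".join(lines)


damaged_item = TemplateSchema(
    purpose="Resolve a damaged-shipment report in as few steps as policy allows.",
    constraints=["Do not promise a delivery date that cannot be guaranteed."],
    information_requirements=["Order number", "Description or photo of the damage"],
    tool_usage=["Check inventory before offering a replacement."],
    desired_output=["Customer reply", "Structured insight record"],
)
rendered = damaged_item.render()
```

Nothing in the record dictates wording for the reply, which is the point: it describes what to consider, not what to say.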


The Five Steps That Make Templates Production-Ready

A template isn’t just text. In production, it orchestrates five distinct steps, referred to below as layers, each with a specific job.

Step 1: Logic

The reasoning structure - order of operations, decision points, escalation triggers. Not “say this sentence,” but “if the account shows an open compliance flag, follow escalation path B before proceeding with any resolution offer.”
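The compliance-flag example can be sketched as a function that returns an ordered plan rather than reply text. The `AccountState` fields and step names below are hypothetical; `identity_verified` in particular is an added assumption to show a second decision point.

```python
from dataclasses import dataclass
from typing import List


@dataclass(frozen=True)
class AccountState:
    open_compliance_flag: bool
    identity_verified: bool


def plan(account: AccountState) -> List[str]:
    """Return the ordered steps to follow; this never produces reply text."""
    steps: List[str] = []
    if not account.identity_verified:
        steps.append("verify_identity")
    if account.open_compliance_flag:
        # Escalation trigger: path B runs before any resolution offer.
        steps.append("escalation_path_b")
    steps.append("resolution_offer")
    return steps


flagged = plan(AccountState(open_compliance_flag=True, identity_verified=True))
clean = plan(AccountState(open_compliance_flag=False, identity_verified=True))
```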

Step 2: Guardrails

What the agent must not do, regardless of what the customer requests. Regulatory constraints, commitment limits, topics requiring human review. In insurance: never speculate on coverage interpretation, never promise approval timelines, follow jurisdiction-specific disclosure language.

Notch implements guardrails as a five-layer stack: LLM-as-judge, technical defenses, access limits, business limits, and geo limits - each enforced independently so no single failure exposes the system.
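The idea of independently enforced layers can be illustrated with a list of checks that all run on every proposed action, so that one layer passing (or crashing) never skips the others. This is a sketch under heavy assumptions, not Notch's implementation: the layer bodies, limits, tool names, and regions are invented, and the LLM-as-judge layer is replaced by a trivial keyword check so the example runs offline.

```python
from dataclasses import dataclass
from typing import Callable, List, Optional


@dataclass(frozen=True)
class ProposedAction:
    reply_text: str
    tool: str
    amount: float
    region: str


Check = Callable[[ProposedAction], Optional[str]]


def judge_layer(a: ProposedAction) -> Optional[str]:
    # Stand-in for an LLM-as-judge call (assumption: a keyword test for the demo).
    return "speculates on coverage" if "you are covered" in a.reply_text.lower() else None


def technical_layer(a: ProposedAction) -> Optional[str]:
    return "reply too long" if len(a.reply_text) > 2000 else None


def access_layer(a: ProposedAction) -> Optional[str]:
    allowed = {"lookup_policy", "issue_refund"}  # hypothetical tool allowlist
    return None if a.tool in allowed else f"tool not allowed: {a.tool}"


def business_layer(a: ProposedAction) -> Optional[str]:
    return "amount over limit" if a.amount > 200 else None  # hypothetical limit


def geo_layer(a: ProposedAction) -> Optional[str]:
    return None if a.region in {"US", "CA"} else f"region not served: {a.region}"


LAYERS: List[Check] = [judge_layer, technical_layer, access_layer, business_layer, geo_layer]


def evaluate(action: ProposedAction) -> List[str]:
    violations: List[str] = []
    for layer in LAYERS:
        try:
            problem = layer(action)
        except Exception as exc:
            # Fail closed: a crashing layer counts as a violation.
            problem = f"{layer.__name__} errored: {exc}"
        if problem:
            violations.append(problem)
    return violations


ok = evaluate(ProposedAction("I have issued your refund.", "issue_refund", 50.0, "US"))
bad = evaluate(ProposedAction("You are covered for this.", "delete_account", 50.0, "US"))
```

Collecting every violation, instead of stopping at the first, also gives reviewers a fuller picture of why an action was blocked.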

Step 3: Data Connectors

Language alone doesn’t resolve tickets. The agent needs live context: claims system status, policy terms, billing history, adjuster notes. The template specifies which systems are authoritative, in what order to query them, and how to reconcile conflicts across sources.
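A simple way to express "which system is authoritative, and how conflicts are reconciled" is an ordered list of sources where the first source to supply a field wins and every disagreement is recorded. The source names and fields here are hypothetical, and first-wins is only one possible reconciliation policy.

```python
from typing import Any, Dict, List, Tuple

Source = Tuple[str, Dict[str, Any]]


def reconcile(sources: List[Source]) -> Tuple[Dict[str, Any], List[str]]:
    """Merge records, trusting earlier sources over later ones."""
    merged: Dict[str, Any] = {}
    origin: Dict[str, str] = {}
    conflicts: List[str] = []
    for name, record in sources:  # highest authority first
        for key, value in record.items():
            if key not in merged:
                merged[key] = value
                origin[key] = name
            elif merged[key] != value:
                conflicts.append(
                    f"{key}: kept {origin[key]}={merged[key]!r}, ignored {name}={value!r}"
                )
    return merged, conflicts


context, conflicts = reconcile([
    ("claims_system", {"status": "open", "adjuster": "unassigned"}),
    ("billing", {"status": "closed", "balance": 0}),
])
```

The conflict list is worth keeping: repeated disagreements between two systems are themselves an operational signal.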

Step 4: Deterministic Rules

Some decisions must not be probabilistic. Refund eligibility thresholds, coverage limits, regulatory response windows, routing logic - these are computed, not inferred. The template specifies which decisions require deterministic validation and what happens when the check fails.
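A deterministic rule is ordinary code: the same inputs always give the same answer, and the failure path is spelled out. The sketch below uses refund eligibility as the example; the 30-day window, the 500 limit, and the `route_to_human` action are illustrative assumptions.

```python
from dataclasses import dataclass
from datetime import date


@dataclass(frozen=True)
class RuleResult:
    passed: bool
    on_fail: str  # what the template does when the check fails


def refund_eligible(
    purchased: date,
    today: date,
    amount: float,
    window_days: int = 30,     # hypothetical policy window
    max_amount: float = 500.0  # hypothetical refund ceiling
) -> RuleResult:
    within_window = (today - purchased).days <= window_days
    within_limit = amount <= max_amount
    passed = within_window and within_limit
    return RuleResult(passed, "" if passed else "route_to_human")


recent = refund_eligible(date(2024, 1, 1), date(2024, 1, 20), 100.0)
stale = refund_eligible(date(2024, 1, 1), date(2024, 3, 1), 100.0)
```

The model can explain the result to the customer, but it does not get to compute or override it.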

Step 5: Insight Extraction

Every interaction produces structured output beyond the resolution itself: root cause category, policy friction point, resolution path taken, escalation trigger if any, and gaps in the knowledge base. This layer transforms a closed ticket into an operational signal - making every interaction a learning event, not just a transaction.

Together, these five layers produce structured workflow execution with deterministic validation and configurable guardrails - the architecture that makes AI agents deployable in regulated environments, not just convincing in demos.

Templates for Insights: Designing the Output, Not Just the Process

Most implementations stop at closure. Did the ticket close? That’s insufficient instrumentation.

Well-designed templates treat insight extraction as a required output. At the end of every interaction, the template instructs the agent to produce structured data: root cause category, policy friction point, resolution path taken, escalation trigger (if any), and whether the case exposed a gap in the knowledge base.

When 50,000 tickets run through a template that extracts this data, operations teams can see which claim types generate the most escalations, which policy clauses cause the most confusion, and where the AI is hitting the limits of its scope. That feedback loop makes the system self-improving.
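The structured output described above can be sketched as a fixed record per interaction plus a simple aggregation. The field names follow the list in the text; the category values and the `escalations_by_root_cause` helper are hypothetical.

```python
from collections import Counter
from dataclasses import dataclass
from typing import Iterable, Optional


@dataclass(frozen=True)
class Insight:
    root_cause: str
    policy_friction_point: str
    resolution_path: str
    escalation_trigger: Optional[str]  # None when the case was not escalated
    knowledge_gap: bool


def escalations_by_root_cause(insights: Iterable[Insight]) -> Counter:
    # Count only the interactions that actually escalated.
    return Counter(i.root_cause for i in insights if i.escalation_trigger is not None)


log = [
    Insight("water_damage", "clause_4b", "adjuster_review", "coverage_question", False),
    Insight("water_damage", "clause_4b", "adjuster_review", "coverage_question", True),
    Insight("late_delivery", "none", "refund", None, False),
    Insight("theft", "clause_9", "adjuster_review", "missing_police_report", False),
]
top = escalations_by_root_cause(log).most_common(1)
```

Because every record has the same shape, the same query works unchanged whether the log holds four tickets or 50,000.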

Three Real-World Examples

Insurance: Time-Demand Detection

A large U.S. carrier receives millions of documents annually - attorney packets, medical records, repair invoices - initially misclassified as general correspondence. Some contain time-demand letters with 10- or 15-day response windows. Missing them carries regulatory and legal exposure.

The template runs the Agent Resolution Loop: classify hierarchically, extract deadlines with precise working-day calculations, generate a structured adjuster summary, escalate immediately if the window is within 48 hours. Guardrails ensure the agent never speculates on coverage; deterministic rules handle eligibility logic. Insight output captures claim type, blockage category, and escalation trigger.
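The deadline arithmetic is a natural fit for deterministic code. The sketch below counts working days by skipping weekends only and flags anything due within 48 hours. It assumes no holiday calendar and a 17:00 close of business on the due date; real response windows depend on the jurisdiction and the letter itself.

```python
from datetime import date, datetime, time, timedelta


def add_working_days(start: date, days: int) -> date:
    """Return the date `days` working days after `start` (weekends skipped)."""
    current = start
    remaining = days
    while remaining > 0:
        current += timedelta(days=1)
        if current.weekday() < 5:  # Monday=0 ... Friday=4
            remaining -= 1
    return current


def escalate_now(deadline: date, now: datetime) -> bool:
    # Assumption: the response is due at 17:00 local time on the deadline date.
    due = datetime.combine(deadline, time(17, 0))
    return due - now <= timedelta(hours=48)


received = date(2024, 1, 1)              # a Monday
deadline = add_working_days(received, 10)
urgent = escalate_now(deadline, datetime(2024, 1, 14, 9, 0))
not_yet = escalate_now(deadline, datetime(2024, 1, 10, 9, 0))
```

A deadline that has already passed also returns `True`, which is the safer default for a check whose job is to surface exposure.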

Retail: Damage and Urgency

A customer reports a damaged shipment needed for a time-sensitive event. The template confirms damage via structured intake, checks inventory and expedited shipping through a live connector, and presents ranked resolution options with tradeoffs stated explicitly. Guardrails prevent the agent from promising delivery windows it cannot guarantee. Insight output captures damage type, fulfillment failure category, and resolution path chosen.
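Ranking options with explicit tradeoffs might look like the sketch below: options guaranteed to arrive before the event sort first, and the description only states a date when one is actually guaranteed. The option names, dates, and the `guaranteed_by` field are hypothetical stand-ins for what a live inventory and shipping connector would return.

```python
from dataclasses import dataclass
from datetime import date
from typing import List, Optional, Tuple


@dataclass(frozen=True)
class Option:
    name: str
    guaranteed_by: Optional[date]  # None when no delivery date can be guaranteed
    tradeoff: str


def rank(options: List[Option], needed_by: date) -> List[Option]:
    def key(o: Option) -> Tuple[int, date]:
        on_time = o.guaranteed_by is not None and o.guaranteed_by <= needed_by
        return (0 if on_time else 1, o.guaranteed_by or date.max)
    return sorted(options, key=key)


def describe(o: Option) -> str:
    # Guardrail: a date is stated only when it is marked as guaranteed.
    if o.guaranteed_by is not None:
        when = f"guaranteed by {o.guaranteed_by.isoformat()}"
    else:
        when = "no guaranteed delivery date"
    return f"{o.name}: {when}; tradeoff: {o.tradeoff}"


event_day = date(2024, 6, 14)
ranked = rank(
    [
        Option("Standard replacement", date(2024, 6, 18), "arrives after the event"),
        Option("Refund", None, "customer must source the item elsewhere"),
        Option("Expedited replacement", date(2024, 6, 12), "higher shipping cost"),
    ],
    event_day,
)
summary = [describe(o) for o in ranked]
```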

Finance: Transaction Rejection

A customer’s transfer was rejected and they don’t understand why. The template queries transaction status and rejection codes, identifies the remediation path (KYC mismatch, limit exceeded, routing error), and walks the customer through the minimum required correction - without collecting unnecessary sensitive data. Guardrails enforce compliant phrasing and risk-based escalation. Insight output logs the rejection reason and flags systemic UX patterns for upstream review.
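Mapping rejection codes to remediation paths is essentially a lookup table that also names the minimum data to collect. The code strings, path names, and field names below are invented for illustration; a real payment system would define its own codes.

```python
from dataclasses import dataclass
from typing import Dict, Tuple


@dataclass(frozen=True)
class Remediation:
    path: str
    fields_to_collect: Tuple[str, ...]  # the minimum the customer must supply


REMEDIATIONS: Dict[str, Remediation] = {
    "KYC_MISMATCH": Remediation("confirm_identity_details", ("legal_name",)),
    "LIMIT_EXCEEDED": Remediation("lower_amount_or_request_review", ()),
    "ROUTING_ERROR": Remediation("correct_recipient_details", ("routing_number",)),
}

# Unknown codes are never guessed at; they go to a person.
ESCALATE = Remediation("escalate_to_human", ())


def remediation_for(rejection_code: str) -> Remediation:
    return REMEDIATIONS.get(rejection_code, ESCALATE)


kyc = remediation_for("KYC_MISMATCH")
unknown = remediation_for("SOMETHING_NEW")
```

Keeping `fields_to_collect` explicit per path is what prevents the agent from asking for sensitive data the correction does not need.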

Key Takeaway

Templates are not macros. The most powerful templates encode how to think and what to extract, layered on top of a stable resolution loop, so AI agents can resolve issues safely using five production-grade layers - logic, guardrails, data connectors, deterministic rules, and insight extraction - while continuously producing structured signals that make the system smarter over time.

At Notch, we’ve built this approach into the product itself. Templates are not static scripts pasted into an agent - they’re reusable operational blueprints that package SOPs, configuration, guardrails, data access, and insight extraction into a starting point CX teams can actually use. That means non-technical teams can launch faster with proven structures, then continuously adapt them as real conversations reveal new patterns, failure modes, and optimization opportunities. Instead of filing engineering tickets for every workflow change, teams can refine how the agent reasons, what it checks, and what it outputs - making iteration part of day-to-day operations rather than a separate technical project.

See Notch in action and explore how teams use templates to launch, refine, and scale AI agents with better judgment from day one.


Got Questions? We’ve Got Answers

Track autonomous resolution rate for in-scope cases, escalation rate and reason, time-to-resolution, and re-contact rate. Re-contact rate is the honest signal. Resolution, not deflection.
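These rates are straightforward to compute once each ticket carries a few boolean fields. The sketch below is one possible set of definitions, not a standard: it measures autonomous resolution over in-scope tickets, escalation over all tickets, and re-contact over autonomously resolved tickets. Time-to-resolution is left out because it needs timestamps.

```python
from dataclasses import dataclass
from typing import Dict, List


@dataclass(frozen=True)
class Ticket:
    in_scope: bool
    resolved_autonomously: bool
    escalated: bool
    recontacted: bool  # customer came back about the same issue


def rate(numerator: int, denominator: int) -> float:
    return numerator / denominator if denominator else 0.0


def summarize(tickets: List[Ticket]) -> Dict[str, float]:
    in_scope = [t for t in tickets if t.in_scope]
    resolved = [t for t in in_scope if t.resolved_autonomously]
    return {
        "autonomous_resolution_rate": rate(len(resolved), len(in_scope)),
        "escalation_rate": rate(sum(t.escalated for t in tickets), len(tickets)),
        "recontact_rate": rate(sum(t.recontacted for t in resolved), len(resolved)),
    }


metrics = summarize([
    Ticket(True, True, False, False),
    Ticket(True, True, False, True),
    Ticket(True, False, True, False),
    Ticket(False, False, True, False),
])
```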

The escalation trigger in the Logic layer fires. Well-designed templates define their own edges: when no resolution path fits, when a deterministic check fails, or when confidence falls below a threshold, the template routes to a human with full structured context attached.
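The three edges named here translate directly into an ordered check that returns a reason, which then travels with the handoff. The `Assessment` fields, the 0.8 confidence threshold, and the handoff payload shape are assumptions for the sake of a runnable sketch.

```python
from dataclasses import dataclass
from typing import Any, Dict, List, Optional


@dataclass(frozen=True)
class Assessment:
    candidate_paths: List[str]
    deterministic_checks_passed: bool
    confidence: float


def escalation_reason(a: Assessment, min_confidence: float = 0.8) -> Optional[str]:
    if not a.candidate_paths:
        return "no_resolution_path"
    if not a.deterministic_checks_passed:
        return "deterministic_check_failed"
    if a.confidence < min_confidence:
        return "low_confidence"
    return None


def handoff(a: Assessment, context: Dict[str, Any]) -> Optional[Dict[str, Any]]:
    reason = escalation_reason(a)
    if reason is None:
        return None  # the agent keeps the case
    # The structured context goes with the case so the human does not start cold.
    return {"route": "human", "reason": reason, "context": context}


kept = handoff(Assessment(["refund"], True, 0.93), {"ticket": "T-1"})
routed = handoff(Assessment(["refund"], True, 0.41), {"ticket": "T-2"})
```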

Yes. Templates are versioned schema files, independent of the underlying model. A change to guardrail thresholds or a new data connector is a configuration update to the template layer - not a model retrain.

A chatbot script maps inputs to outputs: if the user says X, respond Y. An AI agent template maps a reasoning structure: what information to gather, what constraints to enforce, what tools to use, and what resolution looks like. Templates handle variance. Scripts break in it.

Certain decisions - eligibility thresholds, regulatory windows, routing logic - must not be probabilistic. The template specifies which decisions require deterministic validation. When those rules run, they produce a binary outcome the AI cannot override. That’s the architecture that makes agents deployable in compliance-sensitive environments.
