11 Questions Every Insurance CIO Should Be Asking About AI Agents


What came up at an industry panel and a CIO workshop in New York - and why the answers matter more than the technology.

The same questions kept surfacing across two recent industry events - a panel on autonomous customer support in insurance, and a CIO workshop in New York focused on the NAIC AI pilot and what it means for carrier operations.

None of the questions were about whether to adopt AI. That debate is over. The questions were about how to adopt it without creating a governance problem, a trust problem, or a regulatory problem you can't unwind. Here are eleven of them.

Part I: From Automation to Autonomy

From an industry panel on rethinking operations flow and customer support in insurance, where Elool Jacoby, CPO at Notch, fielded audience questions. Here are the top questions, along with the best practices for deploying AI agents in regulated industries that came out of them:

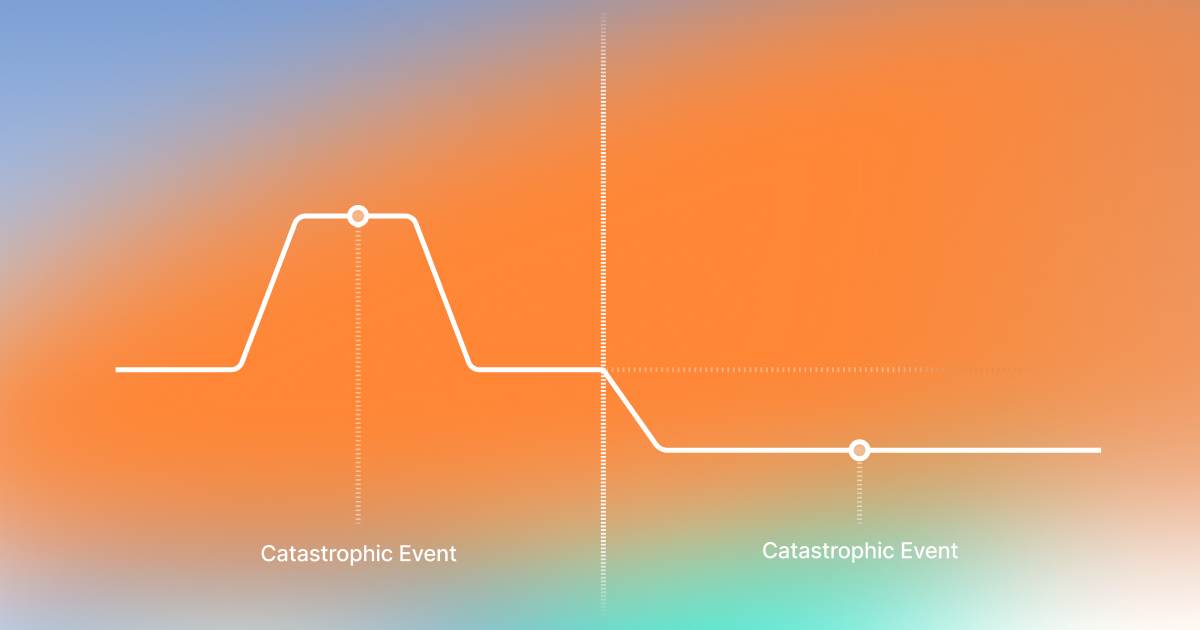

"The insurance industry has experimented with automation for years, often with mixed results. What actually distinguishes autonomous AI from earlier generations of automation - and why is insurance only now ready for this shift?"

"Older automation was really about having different silos in a company, and you'd build these rigid automation routines - RPA and then ML to handle specific tasks. But autonomous AI is more like adding a new kind of employee who can think across different parts of the organization. We're not just automating a single repetitive task. We're enabling a system that handles more complex flows and interacts fluidly across different areas.

The insurance industry is ready for this now because the technology is mature enough to handle these complex, cross-silo interactions. It's less about just automating tasks and more about enabling a truly autonomous system that can adapt and make decisions."

"Insurance interactions happen at moments of stress - claims, disputes, financial uncertainty. How do you think about designing AI agents that can earn trust and demonstrate empathy in those situations?"

"It's all about making sure the AI really understands the emotional context. Insurance situations are often stressful, and an AI that can respond in a calm, reassuring way - and know when to hand off to a human if needed - that's key.

AI can actually excel at being consistent with regulatory language and staying patient no matter how complex the issue is. It doesn't have a bad Monday morning. But humans should definitely stay in the loop for those nuanced, empathetic touches and for final quality checks."

"And where do you think AI agents can genuinely outperform human agents?"

"Consistency. A human agent might handle the same endorsement correctly 85% of the time. The AI handles it correctly 100% of the time - or it escalates. There's no variance between a Monday morning agent and a Friday afternoon agent. Same regulatory language, same patience, same quality standard at 2 AM as at 10 AM.

But humans should remain in the loop for crisis situations that require genuine empathy, for the final judgment calls on high-stakes decisions, and for understanding context beyond what's in the system."

"Insurance support isn't one job - it's claims handling, policy servicing, renewals, regulatory communication. How important is domain-specific specialization, and what risks do you see in treating insurance support as a single workflow?"

"In these heavily regulated industries, you can't just rely on a one-size-fits-all approach. Using a single prompt or a general model isn't robust enough for all the complexity. And training one big model over and over isn't always agile enough either.

Instead, we're talking about having a more modular or 'agentic' workflow where you break down tasks into smaller specialized pieces. That way, the AI can be really good at each specific part of the insurance process - claims, policy updates, certificate of insurance (COI) issuance - without oversimplifying things. A claims first notice of loss (FNOL) flow and a billing inquiry have entirely different data requirements, compliance constraints, and resolution paths."
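To make the modular idea concrete, here is a minimal Python sketch of routing requests to specialized flows, each of which declares the data it needs before it can act. The intents, field names, and the `route` function are hypothetical illustrations, not Notch's implementation.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class WorkflowSpec:
    """A specialized flow and the data it must have before it can act (hypothetical)."""
    name: str
    required_fields: frozenset[str]


# Illustrative flows only: each one has its own data requirements.
WORKFLOWS = {
    "claims_fnol": WorkflowSpec(
        "claims_fnol", frozenset({"policy_number", "loss_date", "loss_description"})
    ),
    "billing_inquiry": WorkflowSpec(
        "billing_inquiry", frozenset({"policy_number", "invoice_id"})
    ),
    "coi_issuance": WorkflowSpec(
        "coi_issuance", frozenset({"policy_number", "certificate_holder"})
    ),
}


def route(intent: str, data: dict[str, str]) -> str:
    """Send a request to its specialized flow, ask for missing data, or escalate."""
    spec = WORKFLOWS.get(intent)
    if spec is None:
        # No specialized flow exists for this request, so a human takes it.
        return "escalate:unknown_intent"
    # Iterating a dict yields its keys, so this is "required minus provided".
    missing = spec.required_fields.difference(data)
    if missing:
        return "collect:" + ",".join(sorted(missing))
    return f"run:{spec.name}"
```

A billing inquiry missing an invoice ID gets a request for that field instead of being pushed through the claims flow, and anything without a specialized flow goes to a human.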

"As AI agents move from answering questions to making decisions, how should insurance companies think about responsibility, explainability, and governance?"

"Our approach is three parts. First, start narrow and focus deeply on one use case to build trust and expertise. Second, make sure the AI isn't a black box - you have clear reasoning and visibility into each decision step. And third, keep a human in the loop at the start to approve actions and ensure everything is governed properly.

All of that together helps build confidence and lets you expand responsibly. The carriers that skip steps - that try to deploy across five workflows simultaneously without governance infrastructure - those are the ones stuck in 18-month pilots that never reach production."
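The third step, keeping a human in the loop to approve actions, can be sketched as a gate between what the agent proposes and what actually happens. This is a minimal Python sketch; the `propose` and `review` functions and the status names are assumptions for illustration, and "execution" is represented only by a status change.

```python
from dataclasses import dataclass
from enum import Enum
from typing import Optional


class Status(Enum):
    PENDING_APPROVAL = "pending_approval"
    EXECUTED = "executed"
    REJECTED = "rejected"


@dataclass
class ProposedAction:
    action: str
    rationale: str  # the agent's stated reasoning, kept visible for the reviewer
    status: Status = Status.PENDING_APPROVAL
    reviewed_by: Optional[str] = None


def propose(action: str, rationale: str, require_approval: bool) -> ProposedAction:
    """Record what the agent wants to do and why; skip review only if not required."""
    proposal = ProposedAction(action, rationale)
    if not require_approval:
        # Placeholder: a real system would perform the action here.
        proposal.status = Status.EXECUTED
    return proposal


def review(proposal: ProposedAction, reviewer: str, approve: bool) -> ProposedAction:
    """A human approves (the action then proceeds) or rejects a pending proposal."""
    if proposal.status is not Status.PENDING_APPROVAL:
        raise ValueError("only pending proposals can be reviewed")
    proposal.reviewed_by = reviewer
    # Placeholder again: approval is where the real action would be triggered.
    proposal.status = Status.EXECUTED if approve else Status.REJECTED
    return proposal
```

Turning `require_approval` off for one action type at a time, once that use case has earned trust, is one way to expand from the narrow starting point without skipping steps.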

"Beyond response time and cost reduction, what metrics do you believe actually reflect a high-quality customer experience in AI-led insurance support?"

"A lot of traditional metrics - CSAT, first response time - aren't really the best indicators anymore. CSAT can be influenced by things like company policies rather than the actual quality of the AI interaction. And first response time, which for AI is always under 5-10 seconds, isn't an interesting comparison against human agents anymore.

Instead, we're building toward a 'QA score agent' that focuses on whether the customer truly got what they needed and whether the AI delivered the right resolution. It's more about the quality and relevance of the outcome rather than just speed or a generic satisfaction metric."

"Can you break down what goes into that agent score?"

"In addition to whether the customer got what they needed, we're measuring whether the AI used the right knowledge, selected the correct data, and applied the correct classification to arrive at that solution. It's not just about the end result, but about how accurately and appropriately the AI got there.

That gives you a much fuller picture of quality. You're measuring the reasoning, not just the customer's mood after the interaction."
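The score described above grades the reasoning as well as the outcome. A minimal sketch, assuming each dimension is a pass/fail verdict from AI or human QA and that the four dimensions are weighted equally (both are assumptions, not the actual scoring method):

```python
from dataclasses import dataclass, fields


@dataclass(frozen=True)
class QAReview:
    """One reviewer's verdict (AI or human QA) on a single interaction."""
    resolved_need: bool           # did the customer get what they needed?
    right_knowledge: bool         # was the correct knowledge / SOP used?
    correct_data: bool            # were the right policy or account records selected?
    correct_classification: bool  # was the request classified correctly?


def qa_score(review: QAReview) -> tuple[float, list[str]]:
    """Return the share of dimensions passed and the names of those that failed."""
    checks = {f.name: getattr(review, f.name) for f in fields(review)}
    failed = [name for name, passed in checks.items() if not passed]
    return (len(checks) - len(failed)) / len(checks), failed
```

An interaction that resolved the customer's need but pulled the wrong records still loses points, which is the difference between measuring the outcome and measuring the reasoning.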

"Insurance is full of exceptions and edge cases. How do you approach continuous learning in autonomous systems without compromising consistency, compliance, or customer trust?"

"Start narrow and really understand one use case deeply so you know exactly what you need to handle and where the edge cases are. When you hit something the AI doesn't know, you pass it to a human agent and then learn from that interaction to refine your system over time.

We've also got a feedback framework where either AI or human QA can review if the right knowledge, data, and tone were used. This helps you continuously improve and keep everything consistent and compliant. The key is that improvement happens through governed feedback loops, not through the model drifting on its own."

"If autonomous AI becomes the default layer for insurance customer support, what role do you see humans playing in the future - and how should organizations prepare their teams for that transition?"

"We're seeing a future where humans are more like the operational leaders on top of the AI agents. They'll be the ones managing knowledge, owning SOPs, and making sure the AI is working with the right guidelines. There are also roles for QA, where experienced human agents give feedback and train the AI, and even AI agent managers who bridge the gap between the company's strategy and the automation process.

Humans won't disappear - they'll just be in roles that guide and enhance what the AI does. That's a different skill set, and organizations need to start preparing for that now, not after the deployment is live."

"Traditional fraud detection relies heavily on human intuition - people who notice something feels off about a claim. As AI handles more first-touch interactions, what gets lost in fraud detection capability, and what new signals become possible?"

"We're focusing on building a system that sets clear limitations and guardrails on what the AI can do. You limit certain tasks to one time per user, you have escalation points where a human steps in if something looks off, and you have AI that can detect potential prompt engineering or suspicious patterns.

While you might lose a bit of that human intuition, you gain new capabilities that allow you to spot fraud in ways humans might not."
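The guardrails described above, once-per-user limits on certain tasks plus escalation when something looks off, can be sketched as a small policy check. The task names and the `Guardrails` class are hypothetical, and detecting suspicious input is assumed to happen upstream and arrive as a flag:

```python
from collections import defaultdict

# Hypothetical tasks the agent may perform at most once per user.
ONCE_PER_USER = {"issue_refund", "reissue_payment"}


class Guardrails:
    def __init__(self) -> None:
        # (user_id, task) -> number of times the agent has performed it
        self._counts: defaultdict[tuple[str, str], int] = defaultdict(int)

    def check(self, user_id: str, task: str, flagged_suspicious: bool = False) -> str:
        """Return 'allow' or 'escalate' for a requested task."""
        if flagged_suspicious:
            # Anything that looks off (e.g. suspected prompt manipulation) goes to a human.
            return "escalate"
        if task in ONCE_PER_USER and self._counts[(user_id, task)] >= 1:
            # A repeat of a limited task is handed to a human rather than refused outright.
            return "escalate"
        self._counts[(user_id, task)] += 1
        return "allow"
```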

"Can you give an example?"

"When you have a single AI agent acting as a consistent layer, it actually becomes easier to detect fraud patterns that might slip through when different human agents are handling each interaction. Because the AI supervisor can look at the overall patterns, it can spot if someone is trying the same scam with multiple agents.

Having that centralized AI oversight can actually help you detect fraud more consistently and understand escalation patterns better. You lose the gut feeling but gain the bird's-eye view."
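The bird's-eye view comes from having every interaction land in one place. A minimal sketch, assuming each interaction is logged with the agent that handled it and a normalized claim fingerprint; the fingerprint is a hypothetical key, and building a good one is the hard part, which is not shown here:

```python
from collections import defaultdict
from typing import Iterable, NamedTuple


class Interaction(NamedTuple):
    agent_id: str           # which agent (or channel) handled the interaction
    claim_fingerprint: str  # hypothetical normalized key for "the same story"


def repeated_across_agents(log: Iterable[Interaction], min_agents: int = 3) -> dict[str, set[str]]:
    """Return fingerprints that showed up with at least `min_agents` distinct agents."""
    agents_by_fp: dict[str, set[str]] = defaultdict(set)
    for item in log:
        agents_by_fp[item.claim_fingerprint].add(item.agent_id)
    return {fp: agents for fp, agents in agents_by_fp.items() if len(agents) >= min_agents}
```

Each individual agent sees only one attempt; the supervising view sees the same fingerprint arriving through several of them.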

Part II: Governance and the NAIC AI Pilot

From a CIO workshop in New York. The discussion shifted from capabilities to controls - how carriers should prepare for the regulatory reality that's already here.

What should carriers be doing right now in response to the NAIC AI pilot?

The most important mindset shift is that this is no longer just an innovation conversation. It's becoming an operating model conversation.

The NAIC pilot is effectively asking carriers: can you show us where AI is being used, which use cases are higher risk, how those systems are governed, what data they rely on, and what evidence you have that the controls actually work? The right response isn't to slow down AI adoption. It's to make AI more governable.

That means three things. First, a real inventory of AI systems across underwriting, claims, servicing, fraud, and operations. Second, a governance model that assigns ownership, defines escalation paths, and distinguishes lower-risk from higher-risk use cases. Third - and this is where most carriers are weakest - evidence. Not policy documents. Evidence. Regulators will care much less about what your standards say, and much more about whether you can show audit trails, testing records, model documentation, and decision traceability.
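The inventory, ownership, and evidence points can be sketched as a simple data model that flags gaps. The risk tiers, evidence names, and which evidence each tier requires are assumptions for illustration, not taken from the NAIC pilot:

```python
from dataclasses import dataclass, field
from enum import Enum
from typing import Optional


class RiskTier(Enum):
    LOWER = "lower"
    HIGHER = "higher"


# Hypothetical evidence expectations per tier.
REQUIRED_EVIDENCE = {
    RiskTier.LOWER: {"model_documentation"},
    RiskTier.HIGHER: {"audit_trail", "testing_records", "model_documentation", "decision_traceability"},
}


@dataclass
class AISystem:
    name: str
    business_area: str    # e.g. underwriting, claims, servicing, fraud, operations
    owner: Optional[str]  # accountable person or team, if one is assigned
    risk_tier: RiskTier
    evidence: set[str] = field(default_factory=set)


def governance_gaps(inventory: list[AISystem]) -> dict[str, list[str]]:
    """Per system, list what is missing: an owner and/or required evidence."""
    gaps: dict[str, list[str]] = {}
    for system in inventory:
        missing = sorted(REQUIRED_EVIDENCE[system.risk_tier] - system.evidence)
        if system.owner is None:
            missing.insert(0, "owner")
        if missing:
            gaps[system.name] = missing
    return gaps
```

The output is a list of gaps rather than a pass/fail verdict, which matches the emphasis on evidence over policy documents.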

The carriers that will win are the ones that operationalize trust. They won't treat governance as a brake on AI. They'll treat it as the infrastructure that lets them scale AI safely.

A lot of carriers worry that AI is still a black box. How do you solve that?

That concern is completely valid. In insurance, a black box isn't just a technical problem. It's a trust problem, an operational problem, and potentially a regulatory problem.

What carriers need isn't vague explainability. They need workflow-level traceability. They need to know what information came in, how it was interpreted, what action was recommended or taken, what business rule applied, whether a human overrode it, and how to trace that back later when a regulator, auditor, or internal compliance team asks questions.

That's exactly how we think about Notch. We call it a glass box. We're not a mysterious model sitting off to the side. We're built around explainable execution. In practice, that means a full audit trail, a decision log, an escalation log, and visibility into the sequence of actions taken in a workflow. Carriers can keep human oversight where they want it and configure the business rules and guardrails around what the AI is allowed to do.

The deeper point: in regulated industries, explainability isn't a UX feature. It's part of the control environment. If you can't reconstruct why a decision was made, you don't have production-grade AI. You have automation theater.
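The traceability questions listed in this answer map naturally onto a decision record. A minimal sketch of an append-only decision log that can reconstruct a case step by step; the field and class names are hypothetical, and this is not Notch's data model:

```python
from dataclasses import dataclass, field
from datetime import datetime, timezone
from typing import Optional


@dataclass(frozen=True)
class DecisionRecord:
    case_id: str
    step: int
    input_summary: str                    # what information came in
    interpretation: str                   # how it was interpreted
    action: str                           # what was recommended or taken
    rule_applied: str                     # which business rule governed the step
    human_override: Optional[str] = None  # who overrode it, if anyone
    at: datetime = field(default_factory=lambda: datetime.now(timezone.utc))


class DecisionLog:
    """Append-only, in-memory log used only to illustrate the record shape."""

    def __init__(self) -> None:
        self._records: list[DecisionRecord] = []

    def append(self, record: DecisionRecord) -> None:
        self._records.append(record)

    def reconstruct(self, case_id: str) -> list[DecisionRecord]:
        """Return every step for one case, in order, for an auditor or regulator."""
        return sorted((r for r in self._records if r.case_id == case_id), key=lambda r: r.step)
```

In production the log would need durable, tamper-evident storage; the in-memory list only shows what each record would capture and how a case is replayed.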

How do you balance innovation with compliance? Doesn't more governance slow everything down?

The opposite is true. Weak governance slows AI down far more than strong governance does.

What slows a carrier down is when every new AI use case turns into a debate about risk, ownership, data provenance, customer impact, and who is accountable if something goes wrong. If those questions are unresolved, every deployment becomes bespoke and painful.

The way to move faster is to standardize the control layer. Clear policies, clear approval logic, strong auditability, identity and permissions controls, and a reliable way to separate recommendation from execution when needed. Once those foundations are in place, carriers can expand use cases much more confidently.

That's one of the reasons we built Notch the way we did. We work inside regulated workflows, not outside them. We adapt to enterprise identity systems, enforce authentication and scoped permissions for sensitive actions, and maintain auditability across the workflow. The goal isn't just to automate one task. The goal is to give carriers a governed way to move from pilot mode to production mode.
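Scoped permissions and authentication for sensitive actions can be sketched as a default-deny check that runs before the agent acts. The action names, scope strings, and `Session` shape are hypothetical; in practice the scopes would come from the carrier's identity system:

```python
from dataclasses import dataclass

# Hypothetical mapping from actions to the permission scope each one requires.
REQUIRED_SCOPE = {
    "read_policy": "policy:read",
    "update_address": "policy:write",
    "issue_coi": "coi:issue",
}
# Hypothetical actions that also need a verified customer identity.
SENSITIVE = {"update_address", "issue_coi"}


@dataclass(frozen=True)
class Session:
    agent_scopes: frozenset[str]  # scopes granted to the agent for this workflow
    customer_authenticated: bool


def authorize(session: Session, action: str) -> bool:
    """Allow only known, in-scope actions; sensitive ones also need an authenticated customer."""
    scope = REQUIRED_SCOPE.get(action)
    if scope is None or scope not in session.agent_scopes:
        return False
    if action in SENSITIVE and not session.customer_authenticated:
        return False
    return True
```

Unknown actions are denied rather than allowed, which keeps new capabilities out of production until someone explicitly grants a scope for them.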

Insurance doesn't need more AI demos. It needs more systems that can survive scrutiny. The companies that figure that out first are the ones that will capture the real value.

What This Comes Down To

The question going forward isn't whether insurers will use AI. They will. The question is which carriers will use AI in a way that is explainable, governable, and regulator-ready.

For years, the industry treated AI as a capability question: can it automate, classify, predict, answer? The NAIC pilot signals the next phase is a control question: can you oversee it, evidence it, and stand behind it?

Carriers that figure this out first - who build the governance infrastructure alongside the AI infrastructure - are the ones that will deploy faster, expand use cases more confidently, and capture the real operational value.

See how Notch agents execute insurance workflows with full traceability and governed autonomy - book a demo.
