Conversational AI in Insurance: From Chatbot to Autonomous Agent

Most insurance carriers are past the question of whether to deploy conversational AI. They already did. A chatbot on the website. An IVR that tries to parse intent before routing to a human. Maybe an internal build that handles a handful of FAQ-level queries.

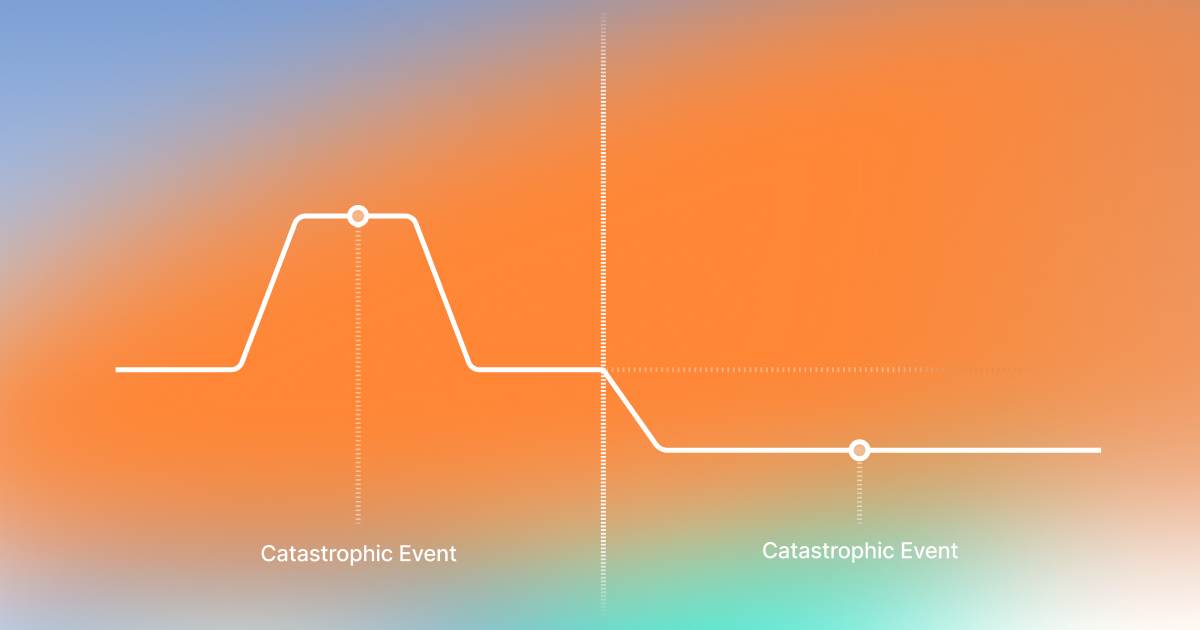

The results were underwhelming. Not because the technology failed, but because it solved the wrong problem. These systems deflect. They recognize what the customer wants, surface a link or a form, and call it handled. The metric that gets reported is containment rate - how many people didn't reach a live agent. What doesn't get reported: how many called back the next day because nothing actually happened.

The carriers we talk to today are not asking "should we use AI?" They're asking a harder question: why didn't our first AI investment impact the bottom line? The answer, almost every time, is the same. They automated the easy parts. The parts that sound like quick wins but don't reduce headcount, don't cut cycle time, and don't change the economics of the operation. And now they're stuck with a technology that can't go further.

Conversational AI in insurance is entering a different phase. The question is no longer whether AI can talk to a policyholder. It's whether the AI can take the interaction from start to finish - pull the right data, apply the right rules, execute the right action, confirm the outcome - and actually move the numbers that matter.

What Conversational AI Actually Means in Insurance

The term covers a wide range of capability. At one end: a keyword-matching chatbot that recognizes "file a claim" and opens a web form. At the other: an autonomous agent that conducts a structured FNOL intake, validates policy coverage, extracts loss details, creates the claim in the system of record, and confirms next steps - all in a single conversation.

These are not incremental improvements on the same technology. They are fundamentally different architectures.

The first generation was built on intent classification. Detect what the caller wants, route them to the right queue, maybe pre-fill some fields. This is deflection infrastructure. It makes the call center slightly more efficient, but the resolution still requires a human.

The current generation is built on agentic execution. The AI doesn't just understand the request - it executes the workflow. It queries policy systems, applies business rules, checks compliance constraints, takes action, and confirms the result. The human reviews exceptions, not every interaction.
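To make the query, rule, action, confirm sequence concrete, here is a minimal sketch for a single servicing request (an address change). Everything in it is an assumption for illustration: `POLICY_SYSTEM` is an in-memory stand-in for a policy administration system, the "only active policies can change" rule is invented, and compliance checking is reduced to simple input validation. A production system would call real systems of record at each step.

```python
from dataclasses import dataclass, field
from typing import Dict, List


@dataclass
class Policy:
    policy_id: str
    active: bool
    address: str


@dataclass
class Outcome:
    status: str  # "resolved" or "escalated"
    reason: str
    audit: List[str] = field(default_factory=list)


# Hypothetical in-memory stand-in for a policy administration system.
POLICY_SYSTEM: Dict[str, Policy] = {
    "P-100": Policy("P-100", active=True, address="1 Old Rd"),
    "P-200": Policy("P-200", active=False, address="9 Elm St"),
}


def change_address(policy_id: str, new_address: str) -> Outcome:
    audit: List[str] = []

    # 1. Query the policy system.
    policy = POLICY_SYSTEM.get(policy_id)
    audit.append("lookup")
    if policy is None:
        return Outcome("escalated", "policy not found", audit)

    # 2. Apply a business rule (illustrative: only active policies can change).
    if not policy.active:
        audit.append("rule:inactive")
        return Outcome("escalated", "policy inactive", audit)

    # 3. Validate the input before touching the record.
    if not new_address.strip():
        audit.append("validation:empty_address")
        return Outcome("escalated", "address missing", audit)

    # 4. Take the action.
    policy.address = new_address.strip()
    audit.append("write")

    # 5. Confirm the result by reading it back.
    if POLICY_SYSTEM[policy_id].address != new_address.strip():
        return Outcome("escalated", "write not confirmed", audit)
    audit.append("confirmed")
    return Outcome("resolved", "address updated", audit)
```

Anything the function cannot complete is returned as `escalated` with a reason and the audit trail so far, which is the "human reviews exceptions" half of the pattern.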

But here is the distinction most buyers miss: the value is not in the agent. It's in the orchestration layer the agent runs on. If you invested in a conversational AI point solution - even a good one - you bought the agent without the operating system. You can't swap models, you can't add new workflows, you can't extend from voice to documents to back-office without rebuilding. The companies that focused on the orchestration layer can do all of that, because every agent is a module on top of a platform that was designed to evolve.

The Three Mistakes Carriers Keep Making

After working with carriers across multiple deployments, the same patterns show up.

1. Starting with the easy parts

It sounds rational: pick the simplest use case, prove the technology, expand later. The problem is that the simplest use cases - FAQ deflection, password resets, basic policy lookups - don't impact the bottom line. They sound like quick wins in a business case, but they don't reduce headcount, they don't change the cost structure, and they don't demonstrate the kind of value that justifies scaling.

Then you're stuck. The technology proved it can handle easy work. Leadership asks: what else can it do? The answer is usually: not much, because the system wasn't built for the hard parts. The architecture that deflects FAQ queries cannot conduct a structured FNOL intake, validate coverage in real time, or execute a multi-step servicing workflow.

Start where the impact is. If the first deployment doesn't touch a workflow that currently requires human labor at scale, it won't build the case for the second deployment.

2. Selecting technology that can't evolve

AI is moving fast. The model you deploy today will not be the best model available in six months. The carrier that locked into a vendor's proprietary model layer, or a single LLM provider, is already behind.

Production-grade platforms must support model swapping - the ability to replace or combine LLMs as the landscape evolves. They must support new technology integration without re-architecture. And they must do this without disrupting live workflows. If upgrading a model requires a new deployment cycle, the technology is not agile enough for this market.
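One common way to get this property is to put a thin routing layer between workflow code and the model. The sketch below is a generic illustration, not any vendor's implementation: `ModelRouter`, `summarize_claim`, and the two stub models are hypothetical names, and a "model" is simplified to a function from prompt text to completion text.

```python
from typing import Callable, Dict, Optional

# A "model" here is any callable from prompt text to completion text.
ModelFn = Callable[[str], str]


class ModelRouter:
    """Keeps workflow code independent of whichever model is active."""

    def __init__(self) -> None:
        self._models: Dict[str, ModelFn] = {}
        self._active: Optional[str] = None

    def register(self, name: str, model: ModelFn) -> None:
        self._models[name] = model

    def activate(self, name: str) -> None:
        if name not in self._models:
            raise KeyError(f"unknown model: {name}")
        self._active = name

    def complete(self, prompt: str) -> str:
        if self._active is None:
            raise RuntimeError("no active model")
        return self._models[self._active](prompt)


def summarize_claim(router: ModelRouter, notes: str) -> str:
    # Workflow code talks to the router, never to a specific provider.
    return router.complete(f"Summarize: {notes}")


# Stub models standing in for real provider clients.
router = ModelRouter()
router.register("model_a", lambda prompt: f"[a] {prompt}")
router.register("model_b", lambda prompt: f"[b] {prompt}")
router.activate("model_a")
```

Calling `router.activate("model_b")` changes which model answers the next request while `summarize_claim` stays untouched. In this sketch the swap is a runtime setting, not a redeployment.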

3. Focusing on the agent instead of the orchestration

This is the most common architectural mistake. A carrier evaluates conversational AI, selects a vendor with a strong agent layer - good NLU, natural conversation, solid voice quality - and deploys it. It works for that one workflow.

Then they need a second workflow. And a third. And a document processing pipeline. And a back-office automation. Each one requires a separate integration, a separate model, a separate set of business rules. There is no shared orchestration layer connecting them.

The right architecture is an AI operating system - an orchestration layer that manages agents, guardrails, business rules, data connectors, and compliance frameworks as a unified platform. Individual agents are modules that plug in. When you build on the orchestration layer, every new workflow inherits the existing guardrails, integrations, and compliance architecture. When you build on the agent, every new workflow starts from zero.
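The inheritance claim can be illustrated with a small sketch. It assumes a hypothetical `Orchestrator` class that owns the guardrails and treats each agent as a plain function; the single guardrail shown (`require_verified_caller`) and the two agents are placeholders.

```python
from typing import Callable, Dict, List, Optional

Request = Dict[str, object]
Guardrail = Callable[[Request], Optional[str]]  # returns a block reason, or None
Agent = Callable[[Request], str]


def require_verified_caller(request: Request) -> Optional[str]:
    return None if request.get("verified") else "caller not verified"


class Orchestrator:
    def __init__(self, guardrails: List[Guardrail]) -> None:
        self.guardrails = list(guardrails)
        self.agents: Dict[str, Agent] = {}

    def register(self, name: str, agent: Agent) -> None:
        self.agents[name] = agent

    def run(self, name: str, request: Request) -> Dict[str, str]:
        # Shared guardrails run before any agent, so new agents inherit them.
        for check in self.guardrails:
            reason = check(request)
            if reason is not None:
                return {"status": "blocked", "reason": reason}
        return {"status": "ok", "result": self.agents[name](request)}


platform = Orchestrator([require_verified_caller])
platform.register("fnol", lambda r: "claim created")
# Adding a second workflow needs no new guardrail code.
platform.register("coi", lambda r: "certificate issued")
```

The `coi` agent was registered with one line and is already subject to the same verification check as `fnol`. Built the other way around, with guardrails living inside each agent, that check would have to be re-implemented per workflow.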

FNOL Is Not a Front-Office Workflow

This is the reframe that changes the conversation.

For carriers and TPAs, FNOL is not a customer service function. It is the first irreversible decision point in the claims lifecycle. Every data error, ambiguity, or omission introduced at intake permanently distorts what comes after: loss severity estimates, reserve accuracy, leakage rates, cycle time, litigation probability, and downstream automation rates.

You cannot fix claims economics downstream if intake is fragmented, stateless, or siloed.

This is why treating FNOL as a chatbot use case is a structural mistake. A chatbot collects data and passes it to a human. An autonomous FNOL agent operates as a persistent, policy-aware control plane - it validates coverage in real time, classifies severity, applies jurisdiction-specific rules, creates the claim record with structured data, routes to the correct adjuster queue, and sets the entire downstream process on the right trajectory.

When FNOL runs on an agentic AI operating system rather than a point solution, the intake agent shares context, guardrails, and business rules with every other agent in the operation. The COI agent knows the same policy data. The document triage agent applies the same classification hierarchy. The broker servicing agent accesses the same coverage validation. Nothing is siloed.

The result is not incremental efficiency. It is structural improvement to expense ratio, loss ratio, and automation ceilings across the claims lifecycle.

Five Workflows Where Autonomous Resolution Is Already in Production

1. FNOL Claims Intake

The AI conducts a structured interview that adapts by claim type - auto accident, property damage, bodily injury each follow different flows with different required disclosures by state. During the conversation, the agent validates identity against the policy system, confirms active coverage, extracts all loss details in structured form, classifies by type and severity, creates the claim record, and routes to the correct adjuster queue.

When the call ends, the claim exists in the system. No human re-entered anything. No callback needed.
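A stripped-down sketch of that intake logic follows. The required fields, the severity rule, and the queue names are all invented for illustration; real flows also vary by state and are driven by the carrier's own rules.

```python
from typing import Dict, List

# Illustrative required fields per claim type; real flows also vary by state.
REQUIRED_FIELDS: Dict[str, List[str]] = {
    "auto": ["date_of_loss", "location", "vehicle", "injuries"],
    "property": ["date_of_loss", "location", "damage_description"],
}


def missing_fields(claim_type: str, answers: dict) -> List[str]:
    return [f for f in REQUIRED_FIELDS[claim_type] if f not in answers]


def intake(claim_type: str, answers: dict, coverage_active: bool) -> dict:
    if not coverage_active:
        return {"status": "escalated", "reason": "no active coverage"}

    missing = missing_fields(claim_type, answers)
    if missing:
        # The conversation continues with the next unanswered question.
        return {"status": "incomplete", "ask_next": missing[0]}

    # Toy severity rule: any reported injury raises severity.
    severity = "high" if answers.get("injuries") else "standard"
    queue = "injury_adjusters" if severity == "high" else f"{claim_type}_adjusters"
    claim = {"type": claim_type, "severity": severity, "queue": queue, **answers}
    return {"status": "created", "claim": claim}
```

The point of the shape is that a claim record is only created once every required field is present, so nothing downstream has to be re-keyed or chased by callback.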

2. Policy Servicing

Address changes, vehicle additions, payment inquiries, coverage questions, ID card requests. High-volume, low-complexity interactions that consume CSR time without requiring judgment. The autonomous agent handles authentication, pulls current policy state, executes the change in the policy administration system, and confirms. Changes requiring underwriting review get flagged and routed with full context.

3. Certificate of Insurance (COI) Issuance

Brokers and policyholders request COIs constantly. Each requires verifying the policy, confirming certificate holder details, generating the document, and delivering it. Structured input, deterministic validation, system-level execution. What used to be a 24-48 hour turnaround happens in minutes.

4. Broker Servicing

Brokers need policy status across multiple accounts, commission inquiries, endorsement requests, renewal coordination. The AI agent acts as a real-time interface to the carrier's systems - pulling data across policies, answering coverage questions with cited policy language, and executing servicing requests that would otherwise sit in a queue.

5. Claims Document Triage

Not a phone call, but the same agentic architecture applied to operations. Incoming document packets - attorney demands, medical records, repair estimates - are ingested, classified hierarchically, and triaged by urgency. Time-demand letters with 10-day response windows get flagged within minutes of receipt. Structured data is extracted for reserve workflows and routed to the correct adjuster with a prioritized summary.
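The urgency ordering can be sketched in a few lines. This assumes classification has already happened and that each document carries an optional response window in days; the `Document` fields and the sample packet are hypothetical.

```python
from dataclasses import dataclass
from datetime import date, timedelta
from typing import List, Optional


@dataclass
class Document:
    doc_id: str
    doc_type: str
    received: date
    response_days: Optional[int] = None  # set when the document carries a deadline


def due_date(doc: Document) -> Optional[date]:
    if doc.response_days is None:
        return None
    return doc.received + timedelta(days=doc.response_days)


def triage(docs: List[Document]) -> List[Document]:
    # Deadline-bearing documents come first, earliest due date first;
    # everything else follows in order of receipt.
    return sorted(
        docs,
        key=lambda d: (due_date(d) is None, due_date(d) or d.received),
    )


packet = [
    Document("D1", "repair_estimate", date(2024, 3, 1)),
    Document("D2", "attorney_demand", date(2024, 3, 5), response_days=10),
    Document("D3", "medical_record", date(2024, 3, 2)),
]
ordered = [d.doc_id for d in triage(packet)]
```

Here the attorney demand arrives last but sorts to the front of the queue because it is the only document with a response deadline.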

This is the back-office side of conversational AI, and it is where the FNOL reframe matters most. When intake and document triage run on the same orchestration layer, the claim file is coherent from the first phone call through every subsequent document.

The Compliance Architecture

Insurance is not a forgiving environment for AI mistakes. A wrong coverage statement creates E&O exposure. A missed regulatory disclosure triggers compliance violations. An unauthorized action on a policy generates audit findings.

Conversational AI that works in insurance production runs on a five-layer guardrail architecture:

Layer A - LLM-as-judge guardrails: AI evaluators that continuously monitor the conversation. Pre-built detectors catch stuck loops, frustration, and sensitive topics. Organization-specific judges enforce carrier-level rules.

Layer B - Technical defenses: protection against prompt injection, instruction smuggling, and tool abuse. Architectural, not configurable.

Layer C - Access limits: what this user is allowed to do, given their authentication and verification status.

Layer D - Business limits: hard caps on actions - per-transaction limits, rolling counters, mandatory human review above thresholds.

Layer E - Jurisdiction limits: state DOI rules, GDPR, cross-border data restrictions. The same intake flow may require different disclosures in California vs. Texas.

All five layers run independently. A failure in one does not cascade. Every decision is logged with full traceability.
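A minimal sketch of independent, logged layers is shown below, using three of the five layers (access, business, jurisdiction) as simple predicates. The dollar cap and the state list are placeholders, and treating a crashed layer as a denial (fail closed) is our assumption for the sketch, not a described product behavior.

```python
from typing import Callable, Dict, List, Tuple

Check = Callable[[Dict[str, object]], bool]


def run_layers(layers: List[Tuple[str, Check]], request: Dict[str, object]):
    log: List[Tuple[str, str]] = []
    for name, check in layers:
        try:
            verdict = "allow" if check(request) else "deny"
        except Exception as exc:
            # A crashing layer is recorded and treated as a denial (fail closed);
            # the remaining layers still run.
            verdict = f"error:{type(exc).__name__}"
        log.append((name, verdict))
    approved = all(verdict == "allow" for _, verdict in log)
    return approved, log


LAYERS: List[Tuple[str, Check]] = [
    ("access", lambda r: r["verified"] is True),
    ("business", lambda r: r["amount"] <= 5000),  # hypothetical cap
    ("jurisdiction", lambda r: r["state"] in {"CA", "TX"}),
]
```

If the request is missing its `amount`, the business layer errors out, but the jurisdiction layer still produces its own verdict and all three outcomes land in the log. That is the non-cascading behavior in miniature.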

This is the guardrail stack that makes the orchestration layer viable for regulated industries. Without it, an AI operating system is just a workflow engine. With it, it's a governed platform that carriers can trust in production.

The Numbers in Production

Across 20 million FNOL claims intake conversations processed:

- 70-73% autonomous resolution rate - not containment, not deflection, but end-to-end completion

- 6x faster median handling time vs. human baseline

- 15-20% improvement in CSAT/NPS compared to human agents

- 33% reduction in repeat callers

- 200% ROI within 12 months of deployment

- 3-6 weeks from contract to production
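Because the first figure depends on how "autonomous resolution" is defined, it helps to see the two metrics side by side. The sketch below uses one plausible definition, which is our assumption: a conversation counts as autonomously resolved only if no human was involved, the requested action was completed in the system, and the customer did not come back about the same issue. The field names and sample data are illustrative.

```python
from dataclasses import dataclass
from typing import List


@dataclass
class Conversation:
    reached_human: bool
    action_completed: bool  # the requested change or claim exists in the system
    repeat_contact: bool    # same issue raised again within the follow-up window


def containment_rate(convs: List[Conversation]) -> float:
    return sum(not c.reached_human for c in convs) / len(convs)


def autonomous_resolution_rate(convs: List[Conversation]) -> float:
    resolved = sum(
        (not c.reached_human) and c.action_completed and (not c.repeat_contact)
        for c in convs
    )
    return resolved / len(convs)


sample = [
    Conversation(False, True, False),  # resolved by the AI
    Conversation(False, False, True),  # contained, but the caller came back
    Conversation(True, True, False),   # handled by a human
    Conversation(False, True, False),  # resolved by the AI
]
```

On this sample, containment is 0.75 while autonomous resolution is 0.5. The gap is the conversation that never reached an agent but also never got anything done.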

The deployment timeline matters. In-house builds take 12-18 months. A platform approach with a managed service team compresses that to weeks - because the orchestration layer, guardrails, and compliance framework already exist. The carrier configures the platform rather than engineering it.

How to Evaluate Conversational AI for Insurance

Seven questions that separate production systems from demo systems:

1. What is the autonomous resolution rate on real production traffic - not containment, not intent accuracy?

2. Is this an agent, or an orchestration platform that agents run on? Can I add workflows without rebuilding?

3. Can I swap or combine LLMs without a new deployment? What happens when a better model ships next quarter?

4. How does the system handle multi-state compliance? Can I configure jurisdiction-specific rules without engineering?

5. Show me the escalation path. What context transfers to the human?

6. What is the actual deployment timeline - not a pilot, production volume?

7. What does the audit trail look like for a single interaction?

The answers tell you whether you're looking at a chatbot that got smarter, or a platform that was built for regulated operations.

Got Questions? We’ve Got Answers

A chatbot recognizes intent and routes. Conversational AI executes the workflow end-to-end - authenticates, queries systems, applies rules, takes action, confirms the result. The difference is whether the customer's issue is resolved or just acknowledged. But the deeper difference is architectural: a chatbot is a point solution. Conversational AI built on an orchestration layer is a platform that scales across workflows.

Because simple queries don't impact the bottom line. If your AI handles FAQ deflection and basic lookups, it's doing the work that didn't require expensive humans in the first place. The economics change when AI handles FNOL intake, policy servicing, COI issuance, broker workflows - the interactions that currently require trained staff at scale. If your first deployment didn't reduce headcount or cycle time, it solved the wrong problem.

AI is increasingly capable of handling the full claims adjudication process. Modern systems have moved from simple automation to agentic AI that can reason, interpret unstructured data, and make decisions in real time. Fully autonomous processing is already a reality for routine claims. For complex cases, AI accelerates the adjuster's work - document review, policy validation, data extraction - while the human focuses on judgment calls. The degree of autonomy depends on the company's needs and regulatory rules. The technology can run fully automated end-to-end, but you configure where a human stays in the loop - by claim type, severity, dollar amount, or jurisdiction.
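As an illustration of configuring where a human stays in the loop, the sketch below expresses the four dimensions (claim type, severity, dollar amount, jurisdiction) as data. `REVIEW_POLICY` and every value in it are placeholders, not recommendations.

```python
from typing import Dict, List

# Hypothetical review policy; every value is a placeholder, not a recommendation.
REVIEW_POLICY = {
    "claim_types": {"bodily_injury"},
    "severities": {"high"},
    "max_auto_amount": 2500,
    "jurisdictions": {"NY"},
}


def review_reasons(claim: Dict[str, object], policy: dict = REVIEW_POLICY) -> List[str]:
    reasons = []
    if claim["type"] in policy["claim_types"]:
        reasons.append("claim type")
    if claim["severity"] in policy["severities"]:
        reasons.append("severity")
    if claim["amount"] > policy["max_auto_amount"]:
        reasons.append("dollar amount")
    if claim["state"] in policy["jurisdictions"]:
        reasons.append("jurisdiction")
    return reasons


def needs_human(claim: Dict[str, object]) -> bool:
    return bool(review_reasons(claim))
```

Keeping the thresholds in configuration means the degree of autonomy can be tightened or loosened per carrier without changing the agent itself.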

Platform-based deployments with managed service teams average 3-6 weeks from contract to production. In-house builds typically take 12-18 months. The difference is whether you're building the infrastructure or configuring a platform that was built for regulated industries.

Evaluate what you have against three criteria: can it execute workflows end-to-end (not just deflect)? Can it swap models as the technology evolves? Can it extend to new workflows without rebuilding integrations? If the answer to any of these is no, you invested in an agent without an orchestration layer - and you'll hit a ceiling on what it can do for the business.

Book a demo to see how Notch handles FNOL intake, policy servicing, and broker workflows on a single orchestration platform.
